Peak Google? Not Even Close

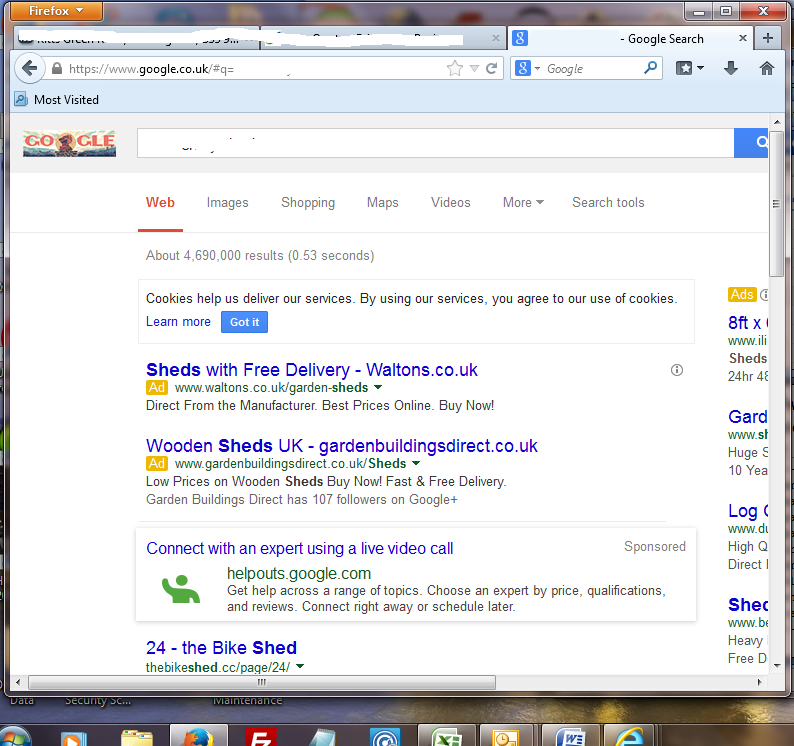

Search vs Native Ads

Google owns search, but are they a one trick pony?

A couple weeks ago Ben Thompson published an interesting article suggesting Google may follow IBM and Microsoft in peaking, perhaps with native ads becoming more dominant than online search ads.

According to Forrester, in a couple years digital ad spend will overtake TV ad spend. In spite of the rise of sponsored content, native isn’t even broken out as a category.

Part of the issue with native advertising is that the category boundaries are blurry, which makes some of it hard to break out. Some of it is obvious, but falls into multiple categories, like video ads on YouTube. Some of it is obvious, but relatively new & thus lacking in scale. Amazon is extending their payment services & Prime shipping deals to third party sites of brands like AllSaints & listing inventory from those sites on Amazon.com, selling them traffic on a CPC basis. Does that count as native advertising? What about a ticket broker or hotel booking site syndicating their inventory to a meta search site?

And while native is not broken out, Google already offers native ad management features in DoubleClick and has partnered with some of the more well known players like BuzzFeed.

The Penny Gap’s Impact on Search

Time intends to test paywalls on all of its major titles next year & they are working with third parties to integrate affiliate ads on sites like People.com.

The second link in the above sentence goes to an article which is behind a paywall. On Twitter I often link to WSJ articles which are behind a paywall. Any important information behind a paywall may quickly spread beyond it, but typically a competing free site which (re)reports on whatever is behind the paywall is shared more, spreads further on social, generates more additional coverage on forums and discussion sites like Hacker News, gets highlighted on aggregators like TechMeme, gets more links, ranks higher, and becomes the default/canonical source of the story.

Part of the rub of the penny gap is the cost of the friction vastly exceeds the financial cost. Those who can flow attention around the payment can typically make more by tracking and monetizing user behavior than they could by charging users incrementally a cent here and a nickel there.

Well known franchises are forced to offer a free version or they eventually cede their market position.

There are sites which do roll up subscriptions to a variety of sites at once, but some of them which had stub articles requiring payment to access like Highbeam Research got torched by Panda. If the barrier to entry to get to the content is too high the engagement metrics are likely to be terrible & a penalty ensues. Even a general registration wall is too high of a barrier to entry for some sites. Google demands whatever content is shown to them be visible to end users & if there is a mismatch that is considered cloaking – unless the mismatch is due to monetizing by using Google’s content locking consumer surveys.

Who gets to the scale needed to have enough consumer demand to be able to charge an ongoing subscription for access to a variety of third party content? There are a handful of players in music (Apple, Spotify, Pandora, etc) & a handful of players in video (Netflix, Hulu, Amazon Prime), but outside of those most paid subscription sites are about finance or niche topics with small scale. And whatever goes behind the paywalls gets seen by almost nobody when compared to the broader public market at the free pricepoint.

Even if you are in a broad niche industry where a subscription-based model works, it still may be brutally tough to compete against Google. Google’s chief business officer joined the board of Spotify, which means Spotify should be safe from Google risk, except…

- In spite of billions of dollars of aggregate royalty payouts by Spotify, Taylor Swift pulled her catalog from Spotify

- shortly after Taylor Swift pulled her catalog from Spotify, YouTube announced their subscription service, which will include Taylor Swift’s catalog & will offer a free 6-month trial

Google’s Impact on Premium Content

I’ve long argued Google has leveraged piracy to secure favorable media deals (see the second bullet point at the bottom of this infographic). Some might have perceived my take as being cynical, but when Google mentioned their “continued progress on fighting piracy” the first thing they mentioned was more ad units.

There are free options, paid options & the blurry lines in between which Google & YouTube ride while they experiment with business models and monetize the flow of traffic to the paid options.

“Tech companies don’t believe in the unique value of premium content over the long term.” – Jessica Lessin

There is a massive misalignment of values which causes many players to have to refine their strategy over and over again. The gray area is never static.

Many businesses only have a 10% or 15% profit margin. An online publishing company which sees 20% of its traffic disappear might thus go from sustainable to bleeding cash overnight. A company which can arbitrarily shift / redirect a large amount of traffic online might describe itself as a “kingmaker.”
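To make the margin arithmetic concrete, here is a rough sketch. It assumes revenue scales in proportion to traffic while costs stay fixed in the short run; the function name and the figures are illustrative and not drawn from any particular publisher.

```python
def profit_after_traffic_loss(margin, traffic_loss):
    """Profit after a traffic drop, as a share of the ORIGINAL revenue.

    Assumptions (illustrative only): revenue is proportional to traffic,
    and costs stay fixed in the short run.
    """
    revenue = 1.0                      # normalize original revenue to 1
    costs = revenue * (1.0 - margin)   # costs implied by the starting margin
    new_revenue = revenue * (1.0 - traffic_loss)
    return new_revenue - costs


for margin in (0.10, 0.15):
    profit = profit_after_traffic_loss(margin, traffic_loss=0.20)
    print(f"{margin:.0%} margin, 20% traffic loss -> {profit:+.0%} of prior revenue")
```

Under those assumptions a 10% margin turns into a loss of roughly 10% of prior revenue, and a 15% margin into a loss of roughly 5%. In practice some costs fall along with traffic, so the real swing may be smaller.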

In Germany some publishers wanted to be paid to be in the Google index. As a result Google stopped showing snippets near their listings. Google also refined their news search integration into the regular search results to include a broader selection of sources including social sites like Reddit. As a result Axel Springer quickly found itself begging for things to go back to the way they were before as their Google search traffic declined 40% and their Google News traffic declined 80%. Axel Springer got their descriptions back, but the “in the news” change remains.

Google’s Impact on Weaker Players

If Google could have that dramatic of an impact on Axel Springer, imagine what sort of influence they have on millions of other smaller and weaker online businesses.

One of the craziest examples is Demand Media.

Demand Media’s market cap peaked above $1.9 billion. They spun out the domain name portion of the business into a company named Rightside Group, but the content portion of the business is valued at essentially nothing. They have about $40 million in cash & equivalents. Earlier this year they acquired Saatchi Art for $17 million & last year they acquired ecommerce marketplace Society6 for $94 million. After their last quarterly result their stock closed down 16.83% & Thursday they were down another 6.32%, giving them a market capitalization of $102 million.

On their most recent conference call, here are some of the things Demand Media executives stated:

- By the end of 2014, we anticipate more than 50,000 articles will be substantially improved by rewrites made rich with great visuals.

- We are well underway with this push for quality and will remove 1.8 million underperforming articles in Q4

- as we strive to create the best experience we can we have removed two ad units with the third unit to be removed completely by January 1st

- (on the above 2 changes) These changes are expected to have a negative impact on revenues and adjusted EBITDA of approximately $15 million on an annualized basis.

- Through Q3 we have invested $1.1 million in content renovation costs and expect approximately another $1 million in Q4 and $2 million to $4 million in the first half of next year.

- if you look at visits or you know the mobile mix is growing which has lower CPM associated with it and then also on desktop we’re seeing compression on the pricing line as well.

- we know that sites that have ad density that’s too high, not only are they offending audiences in near term, you are penalized with respect to where you show up in search indexes as well.

Google torched eHow in April of 2011. In spite of over 3 years of pain, Demand Media is still letting Google drive their strategy, in some cases spending millions of dollars to undo past investments.

Yet when you look at Google’s search results page, they are doing the opposite of the above strategy: more scraping of third party content coupled with more & larger ad units.

“@CyrusShepard: Google’s got you covered: pic.twitter.com/ZU2AOl5EGn” Google copies entire web page?— john andrews (@searchsleuth999) October 30, 2014

Originally the source links in the scrape-n-displace program were light gray. They only turned blue after a journalist started working on a story about 10 blue links.

The Blend

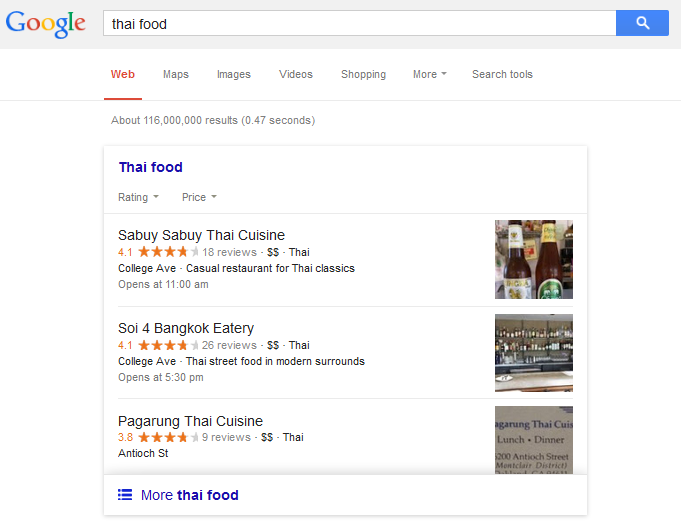

The search results can be designed to have some aspects blend in while others stand out. Colors can change periodically to alter response rates in desirable ways.

The banner ad got a bad rap as publishers have fought declining CPMs by adding more advertisements to their pages. When it works, Google’s infrastructure still delivers (and tracks) billions of daily banner ads.

Search ads have never had the performance decline banner ads have.

The closest thing Google ever faced on that front was when AdBlock Plus became popular. Since it was blocking search ads, Google banned the extension & then restored it once the two sides negotiated a deal in which Google pays AdBlock Plus to let ads display on Google while it continues to block ads on other third party sites.

Search itself *is* the ultimate native advertising platform.

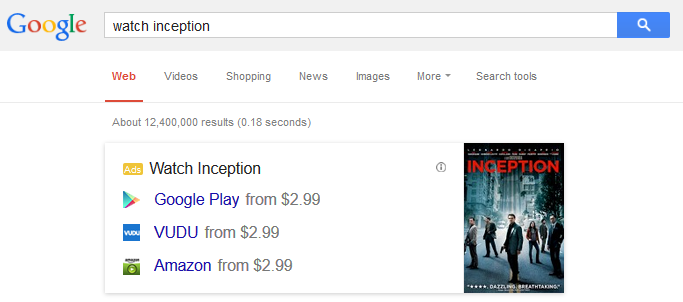

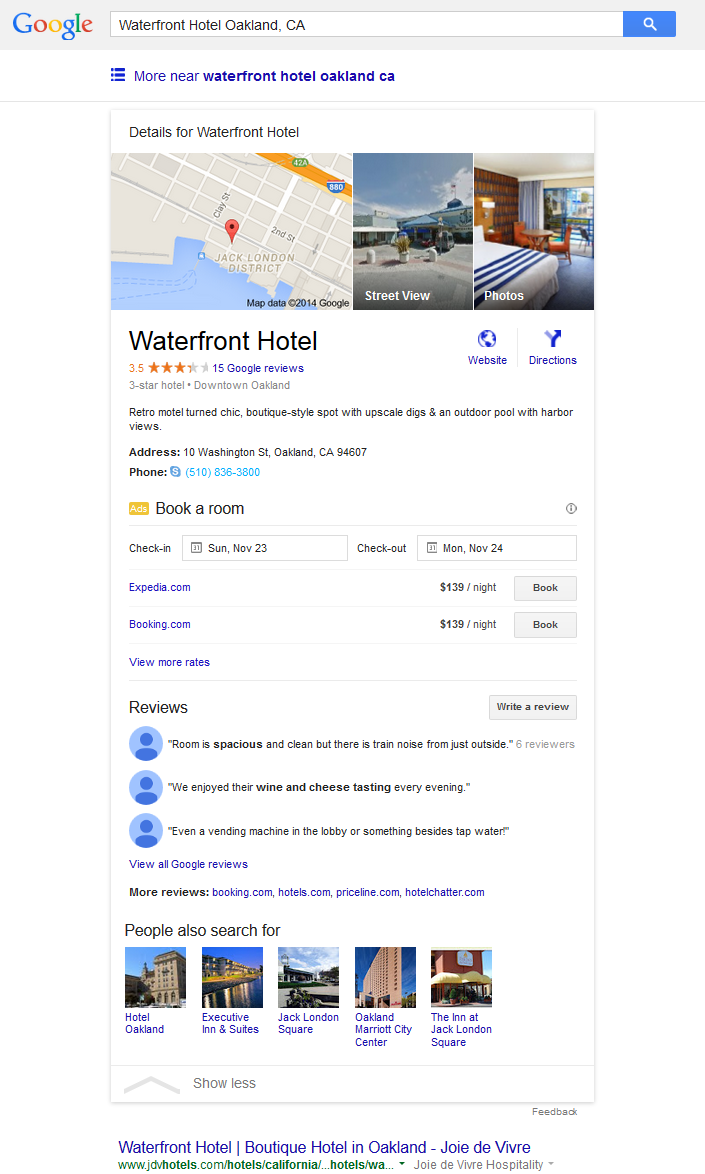

Google is doing away with the local carousel in favor of a 3 pack local listing in categories like hotels. Once a person clicks on one of the hotel listings, Google then inserts an inline knowledge graph listing for that hotel with booking affiliate links inline in the search results, displacing the organic result set below the fold.

Notice in the above graphic how the “website” link uses generic text, is aligned toward the right, and is right next to an image so that it looks like an ad. It is engineered to feel like an ad and be ignored. The actual ads are left aligned and look like regular text links. They have an ad label, but that label is a couple lines up from them & there are multiple horizontal lines between the label and the actual ad units.

Not only does Google have the ability to shift the layout in such a drastic format, but then with whatever remains they also get to determine who they act against & who they don’t. While the SEO industry debates the “ethics” of various marketing techniques Google has their eye on the prize & is displacing the entire ecosystem wholesale.

Users were generally unable to distinguish between ads and organic listings *before* Google started mixing the two in their knowledge graph. That is a big part of the reason search ads have never seen the engagement declines banner ads have seen.

Mobile has been redesigned with the same thinking in mind. Google action items (which can eventually be monetized) up top & everything else pushed down the page.

The blurring of “knowledge” and ads allows Google to test entering category after category (like doctor calls from the search results) & forcing advertisers to pay for the various tests while Google collects data.

And as Google is scraping information from third party sites, they can choose to show less information on their own site if doing so creates additional monetization opportunities. As far back as 2009 Google stripped phone numbers off of non-sponsored map listings. And what happened with the recent 3 pack? While 100% of the above the fold results are monetized, …

“Go back to an original search that turns up the 3 PAC. Its completely devoid of logical information that a searcher would want:

- No phone number

- No address

- No map

- NO LINK to the restaurant website.

Anything that most users would want is deliberately hidden and/or requires more clicks.” – Dave Oremland

Google justifies their scrape-n-displace programs by claiming they put users first. Then they hide some of the information to drive incremental monetization opportunities. Google may eventually re-add some of those basic features which are now hidden, but as part of sponsored local listings.

After all – ads are the solution to everything.

Do branded banner ads in the search results have a low response rate? Or are advertisers unwilling to pay a significant sum for them? If so, Google can end the test and instead shift to include a product carousel in the search results, driving traffic to Google Shopping.

“I see this as yet another money grab by Google. Our clients typically pay 400-500% more for PLA clicks than for clicks on their PPC Brand ads. We will implement exact match brand negatives in Shopping campaigns.” – Barb Young

That money grab stuff has virtually no limit.

The Click Casino

From the start keywords defaulted to broad match. Then campaigns went “enhanced” so advertisers were forced to eat lower quality clicks on mobile devices.

Then there was the blurring of exact match targeting into something else, forcing advertisers to buy lower quality variations of searches & making them add tons of negative keywords (and keep eating new garbage re-interpretations of words) in order to run a fine tuned campaign specifically targeted against a term.

In the past some PPC folks cheered obfuscation of organic search, thinking “this will never happen to me.”

Oops.

And of course Google not only wants to be the ad auction, but they want to be your SEM platform managing your spend & they are suggesting you can leverage the “power” of automated auction time bidding.

Advertisers RAVE about the success of Google’s automatic bidding features: “It received one click. That click cost $362.63.”

The only thing better than that is banner ads in free mobile tap games targeted at children.

Adding Friction

Above I mentioned how Google arbitrarily banned the AdBlock Plus extension from the Play store. They also repeatedly banned Disconnect Mobile. If you depend on mobile phones for distribution it is hard to get around Google. What’s more they also collect performance data, internally launch competing apps, and invest in third party apps. And they control the prices various apps pay for advertising across their broad network.

So maybe you say “ok, I’ll skip search & mobile, I’ll try to leverage email” but this gets back to the same issue again. In Gmail social or promotional emails get quarantined into a ghetto where they are rarely seen:

“online stores, if they get big enough, can act as chokepoints. And so can Google. … Google unilaterally misfiled my daily blog into the promotions folder they created, and I have no recourse and no way (other than this post) to explain the error to them” – Seth Godin

Those friction adders have real world consequences. A year ago Groupon blamed Gmail’s tabs for causing them to have poor quarterly results. The filtering impact on a startup can be even more extreme. A small shift in exposure can lower the K factor to something below 1 & require the startup to buy exposure rather than generating it virally.
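The K factor is commonly described as the average number of new users each existing user brings in. As a rough sketch of why crossing below 1 matters, the toy model below assumes each new cohort recruits K times its own size in the following cycle; the function name, seed size and cycle count are all hypothetical.

```python
def viral_users(seed_users, k_factor, cycles):
    """Total users after a number of invitation cycles.

    Simplified model (an assumption, not a measurement): each new cohort
    recruits k_factor times its own size in the following cycle.
    """
    total = seed_users
    cohort = seed_users
    for _ in range(cycles):
        cohort = cohort * k_factor   # users recruited by the latest cohort
        total += cohort
    return total


for k in (1.1, 0.9):
    print(k, round(viral_users(1000, k, cycles=20)))
```

In this model a K factor below 1 means the total converges toward `seed_users / (1 - k_factor)`, so any growth past that ceiling has to be bought, while a K factor above 1 keeps compounding.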

In addition to those other tabs, there are a host of other risks like being labeled as spam or having a security warning. Few sites promote Google’s view as often as people like Greg Sterling do on Search Engine Land, yet even their newsletter was showing a warning in Gmail.

Google can also add friction to

- websites using search rankings, vertical search result displacement, hiding local business information (as referenced above), search query suggestions, and/or leveraging their web browser to redirect consumer intent

- video on YouTube by counting ad views as organic views, changing the relevancy metrics, and investing in competing channels & giving them preferential exposure as part of the deal. YouTube gets over half their views on mobile devices with small screens, so any shift in Google’s rank preference is going to cause a major shift in click distributions.

- mobile apps using default bundling agreements which require manufacturers to set Google’s apps as defaults

- other business models by banning bundling-based business models too similar to their own (bundling is wrong UNLESS it is Google doing it)

- etc.

The launch of Keyword (not provided) which hid organic search keyword data was friction for the sake of it in organic search. When Google announced HTTPS as a ranking signal, Netmeg wrote: “It’s about ad targeting, and who gets to profile you, and who doesn’t. Mark my words.”

Facebook announced their relaunch of Atlas and Google immediately started cracking down on data access:

In the conversations this week, with companies like Krux, BlueKai and Lotame, Google told data management platform players that they could not use pixels in certain ads. The pixels—embedded within digital ads—help marketers target and understand how many times a given user has seen their messages online.

“Google is only allowing data management platforms to fire pixels on creative assets that they’re serving, on impressions they bought, through the Google Display Network,” said Mike Moreau, chief solutions officer at Krux. “So they’re starting with a very narrow scope.”

Around the same time Google was cracking down on data sharing, they began offering features targeting consumers across devices & announced custom affinity audiences which allow advertisers to target audiences who visit any particular website.

Google’s special role is not only as an organizer (and obfuscator) of information, but then they get to be the source measuring how well marketing works via their analytics, which can regularly launch new reports which may casually over-represent their own contribution while under-representing some other channels, profiting from activity bias. The industry default of last click attribution driving search ad spending is one of the key issues which has driven down display ad values over the years.
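To illustrate why last click attribution tends to favor search, here is a minimal sketch comparing it with an even (linear) split of credit. The channel names and conversion paths are made up for illustration, and real analytics products use more elaborate models than either of these.

```python
from collections import defaultdict


def last_click(paths):
    """Give 100% of each conversion to the final touchpoint."""
    credit = defaultdict(float)
    for path in paths:
        credit[path[-1]] += 1.0
    return dict(credit)


def linear(paths):
    """Split each conversion evenly across every touchpoint in the path."""
    credit = defaultdict(float)
    for path in paths:
        share = 1.0 / len(path)
        for channel in path:
            credit[channel] += share
    return dict(credit)


# Hypothetical conversion paths: each list is one customer's touchpoints in order.
paths = [
    ["display", "email", "search"],
    ["display", "search"],
    ["social", "display", "search"],
]

print("last click:", last_click(paths))
print("linear:    ", linear(paths))
```

In this made-up data search closes every path, so last click hands it all three conversions, while the even split gives display exactly as much credit as search. Budgets set from the first report would starve the channels that started those journeys.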

Investing in Competition

Google not only ranks the ecosystem, but they actively invest in it.

Google tried to buy Yelp. When Facebook took off Google invested in Zynga to get access to data, in spite of a sketchy background. When Google’s $6 billion offer for Groupon didn’t close the deal, Google quickly partnered with over a dozen Groupon competitors & created new offer ad units in the search results.

Inside of the YouTube ecosystem Google also holds equity stakes in leading publishers like Machinima and Vevo.

There have been a few examples of investments getting special treatment, getting benefit of the doubt, or access to non-public information.

The scary scenario for publishers might sound something like this: “in Baidu Maps you can find a hotel, check room availability, and make a booking, all inside the app.” There’s no need to leave the search engine.

Take a closer look & that scary version might already be here. Google’s same day delivery boss moved to Uber and Google added Uber pickups and price estimates to their mobile Maps app.

Google, of course, also invested in Uber. It would be hard to argue that Uber is anything but successful. Though it is also worth mentioning winning at any cost often creates losses elsewhere:

- their insurance situation sounds fuzzy

- the founder doesn’t sound like a nice guy

- they’ve repeatedly engaged in shady endeavors like flooding a competing network with bogus requests & trying to screw with a competitor’s ability to raise funds

- as subprime auto loans have become widespread, Uber has pushed an extreme version of them onto their drivers while cutting their rates – an echo of the utopia of years gone by.

Google invests in disruption as though disruption is its own virtue & they leverage their internal data to drive the investments:

“If you can’t measure and quantify it, how can you hope to start working on a solution?” said Bill Maris, managing partner of Google Ventures. “We have access to the world’s largest data sets you can imagine, our cloud computer infrastructure is the biggest ever. It would be foolish to just go out and make gut investments.”

Combining usage data from their search engine, web browser, app store & mobile OS gives them unparalleled insights into almost any business.

Google is one of the few companies which can make multi-billion dollar investments in low margin areas, just for the data:

Google executives are prodding their engineers to make its public cloud as fast and powerful as the data centers that run its own apps. That push, along with other sales and technology initiatives, aren’t just about grabbing a share of growing cloud revenue. Executives increasingly believe such services could give Google insights about what to build next, what companies to buy and other consumer preferences

Google committed to spending as much as a half billion dollars promoting their shopping express delivery service.

Google’s fiber push now includes offering business internet services. Elon Musk is looking into offering satellite internet services – with an ex-Googler.

The End Game

Google now spends more than any other company on federal lobbying in the US. A steady stream of Google executives have filled US government roles like deputy chief technology officer, chief technology officer, and head of the patent and trademark office. A Google software engineer went so far as suggesting President Obama

- Retire all government employees with full pensions.

- Transfer administrative authority to the tech industry.

- Appoint Eric Schmidt CEO of America.

That Googler may be crazy or a troll, but even if we don’t get their nightmare scenario, if the regulators come from a particular company, that company is unlikely to end up hurt by regulations.

President Obama has stated the importance of an open internet: “We cannot allow Internet service providers to restrict the best access or to pick winners and losers in the online marketplace for services and ideas.”

If there are relevant complaints about Google, who will hear them when Googlers head key government roles?

Larry Page was recently labeled businessperson of the year by Fortune:

It’s a powerful example of how Page pushes the world around him into his vision of the future. “The breadth of things that he is taking on is staggering,” says Ben Horowitz, of Andreessen Horowitz. “We have not seen that kind of business leader since Thomas Edison at GE or David Packard at HP.”

A recent interview of Larry Page in the Financial Times echoes the theme of limitless ambition:

- “the world’s most powerful internet company is ready to trade the cash from its search engine monopoly for a slice of the next century’s technological bonanza.” … “As Page sees it, it all comes down to ambition – a commodity of which the world simply doesn’t have a large enough supply.”

- “I think people see the disruption but they don’t really see the positive,” says Page. “They don’t see it as a life-changing kind of thing . . . I think the problem has been people don’t feel they are participating in it.”

- “Even if there’s going to be a disruption on people’s jobs, in the short term that’s likely to be made up by the decreasing cost of things we need, which I think is really important and not being talked about.”

- “in a capitalist system, he suggests, the elimination of inefficiency through technology has to be pursued to its logical conclusion.”

There are some dark layers which are apparently “incidental side effects” of the techno-utopian desires.

Mental flaws could be reinforced & monetized by hooking people on prescription pharmaceuticals:

It takes very little imagination to foresee how the kitchen mood wall could lead to advertisements for antidepressants that follow you around the Web, or trigger an alert to your employer, or show up on your Facebook page because, according to Robert Scoble and Shel Israel in Age of Context: Mobile, Sensors, Data and the Future of Privacy, Facebook “wants to build a system that anticipates your needs.”

Or perhaps…

Those business savings are crucial to Rifkin’s vision of the Third Industrial Revolution, not simply because they have the potential to bring down the price of consumer goods, but because, for the first time, a central tenet of capitalism—that increased productivity requires increased human labor—will no longer hold. And once productivity is unmoored from labor, he argues, capitalism will not be able to support itself, either ideologically or practically.

That is not to say “all will fail” due to technology. Some will succeed wildly.

Michelle Phan has been able to leverage her popularity on YouTube to launch a makeup subscription service which is at an $84 million per year revenue run rate.

Those at the top of the hierarchy will get an additional boost. Such edge case success stories will be marketed offline to pull more people onto the platform.

Google is promoting Japanese Youtube creators’ personal brands in heavy TV, online, and print ads. Trying to recruit more maybe?— Patrick McKenzie (@patio11) November 1, 2014

While a “star based” compensation system makes a few people well off, most people publishing on those platforms won’t see any financial benefit from their efforts. Worse yet, a lot of the “viral” success stories are driven by a large ad budget.

Category after category gets commoditized, platform after platform gets funded by Google, and ultimately employees working on them will long for the days where their wages were held down by illegal collusion rather than the crowdsourcing fate they face:

Workers, in turn, have more mobility and a semblance of greater control over their working lives. But is any of it worth it when we can’t afford health insurance or don’t know how much the next gig might pay, or when it might come? When an app’s terms of service agreement is the closest thing we have to an employment contract? When work orders come through a smartphone and we know that if we don’t respond immediately, we might not get such an opportunity again? When we can’t even talk to another human being about the task at hand and we must work nonstop just to make minimum wage?

Just as people get commoditized, so do other layers of value:

- those who failed to see the value of & invest in domain names cheered as names lost value

- Google philosophically does not believe in customer service, but they feel they should grade others on their customer service and remove businesses which don’t respond well to culturally unaware outsourced third world labor.

- Google gets people to upload pirate content to YouTube & then uses the existence of the piracy to sell ads. They can also use YouTube to report on the quality of various ISPs, while also blocking competing search engines and apps from being able to access YouTube.

- PageRank extracts the value of editorial votes, allowing Google to leverage the work of editors to refine their search rankings without requiring people to visit the sites. Mix in the couple years Google spent fearmongering about links and it is not surprising to see the Yahoo Directory shut down.

- Remember the time a Google “contractor” did a manual scrape of Mocality in Kenya, then called up the businesses and lied to them to try to get them onto Google? I’d link to the original Mocality blog post, but Mocality Kenya has shut down.

- Google also scraped reviews (sometimes without attribution) & only failed at it when players large enough to be heard by Government regulators complained. This is why government scrutiny of Google is so important. It is one of the few things they actually respond to.

In SEO for a number of years many people have painted brand as the solution to everything. But consider the hotel search results which are 100% monetized above the fold – even if you have a brand, you still must pay to play. Or consider the Google Shopping ads which are now being tested on branded navigational searches.

Google even obtained a patent for targeting ads aimed at monetizing named entities.

You paid to build the brand. Then you pay Google again – “or else.”

One could choose to opt out of Google ad products so as not to pay to arbitrage themselves, but Google is willing to harm their own relevancy to extract revenues.

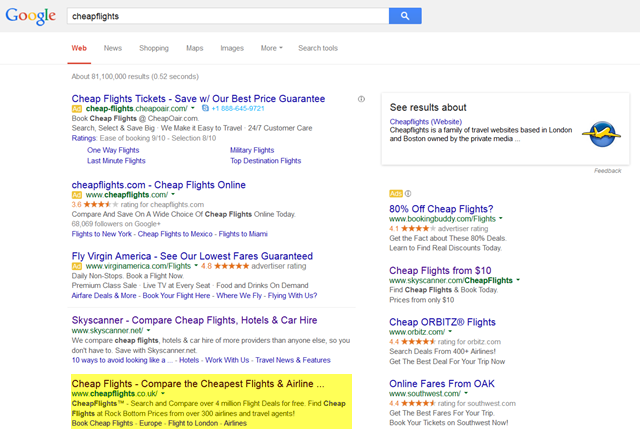

A search in the UK for the trademark term [cheapflights] is converted into the generic search [cheap flights]. The official site is ranking #2 organically and is the 20th clickable link in the left rail of the search results.

As much as brand is an asset, it also becomes a liability if you have to pay again for every time someone looks for your brand.

Mobile apps may be a way around Google, but again it is worth noting Google owns the operating system and guarantees themselves default placement across a wide array of verticals through bundling contracts with manufacturers. Another thing worth considering with mobile is new notification features tied to the operating systems are unbundling apps & Google has apps like Google Now which tie into many verticals.

As SEOs for a long time we had value in promoting the adoption of Google’s ecosystem. As Google attempts to capture more value than they create we may no longer gain by promoting the adoption of their ecosystem, but given their…

- cash hoard

- lobbyists

- ex-employees in key government roles

- control over video, mobile, apps, maps, email, analytics (along with search)

- broad portfolio of investments

… it is hard to think they’ve come anywhere close to peaking.

How Does Yahoo! Increase Search Ad Clicks?

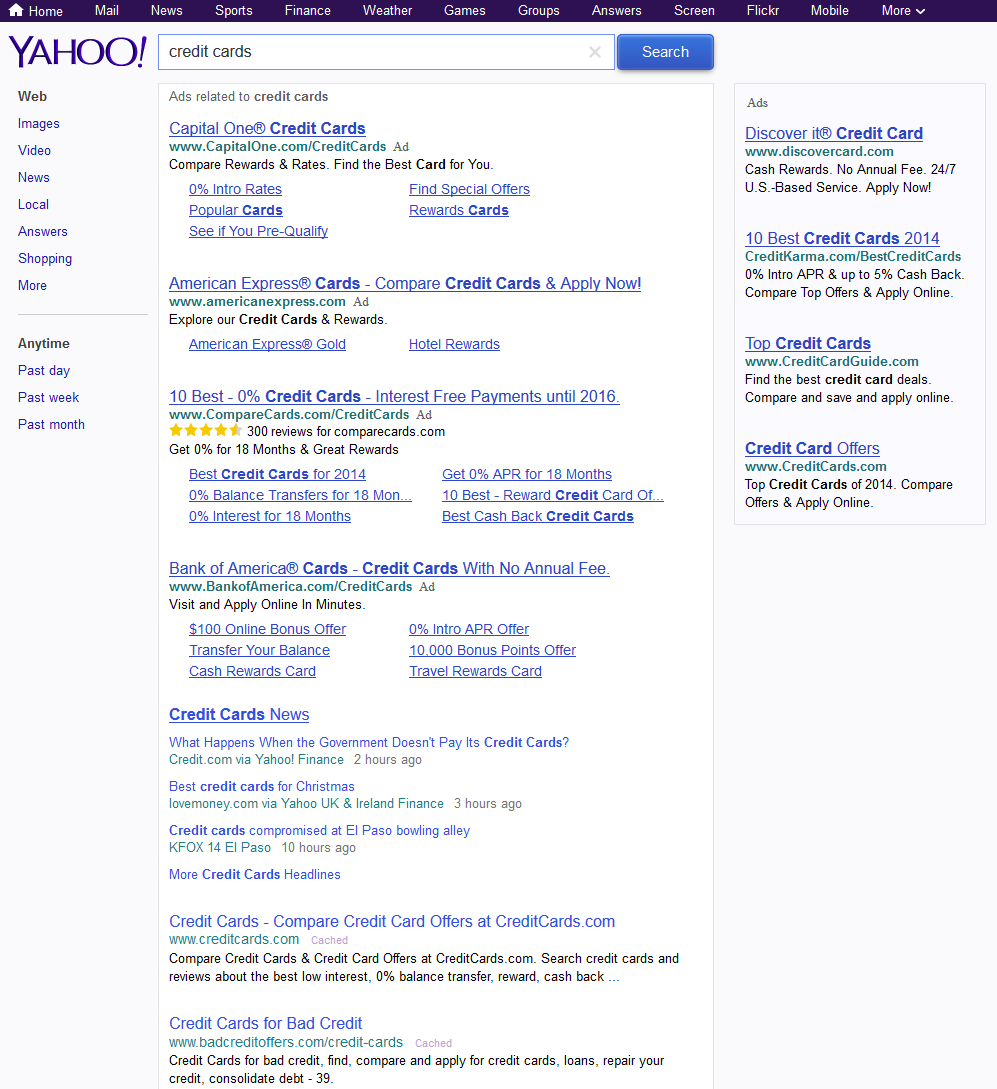

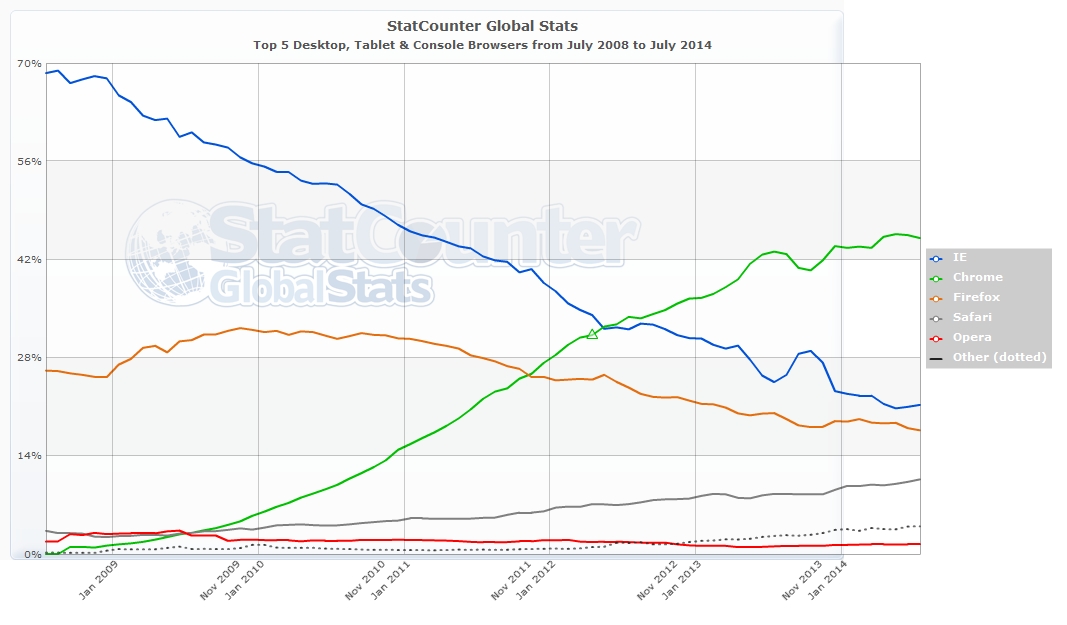

One wonders how Yahoo Search revenues keep growing even as Yahoo’s search marketshare is in perpetual decline.

Then one looks at a Yahoo SERP and quickly understands what is going on.

Here’s a Yahoo SERP test I saw this morning

I sometimes play a “spot the difference” game with my wife. She’s far better at it than I am, but even to a blind man like me there are about a half-dozen enhancements to the above search results to juice ad clicks. Some of them are hard to notice unless you interact with the page, but here’s a few of them I noticed…

| | Yahoo Ads | Yahoo Organic Results |
| --- | --- | --- |
| Placement | top of the page | below the ads |
| Background color | none / totally blended | none |
| Ad label | small gray text to right of advertiser URL | n/a |
| Sitelinks | often 5 or 6 | usually none, unless branded query |
| Extensions | star ratings, etc. | typically none |
| Keyword bolding | on for title, description, URL & sitelinks | off |
| Underlines | ad title & sitelinks, URL on scroll over | off |
| Click target | entire background of ad area is clickable | only the listing title is clickable |

What is even more telling about how Yahoo disadvantages the organic result set is when one of their verticals is included in the result set they include the bolding which is missing from other listings. Some of their organic result sets are crazy with the amount of vertical inclusions. On a single result set I’ve seen separate “organic” inclusions for

- Yahoo News

- stories on Yahoo

- Yahoo Answers

They also have other inclusions like shopping search, local search, image search, Yahoo screen, video search, Tumblr and more.

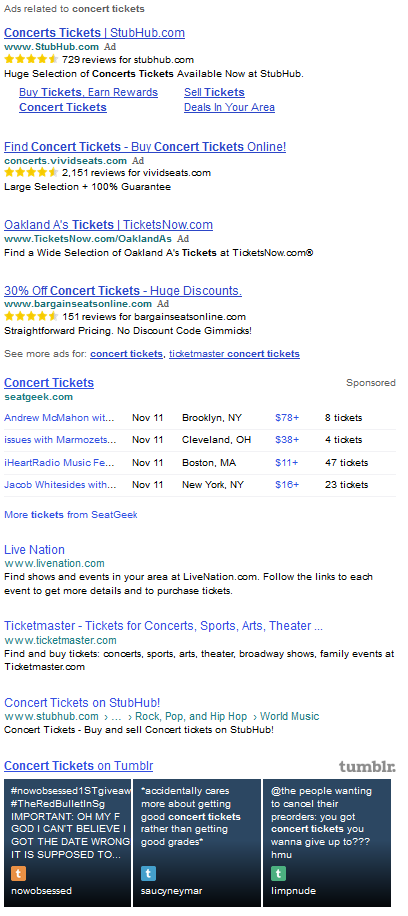

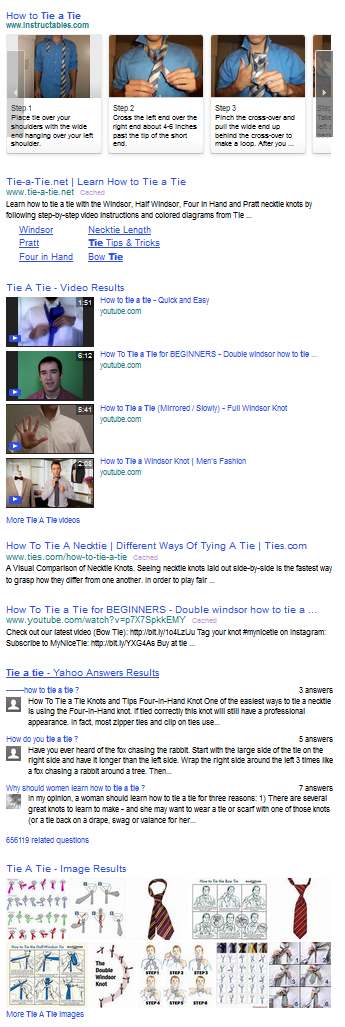

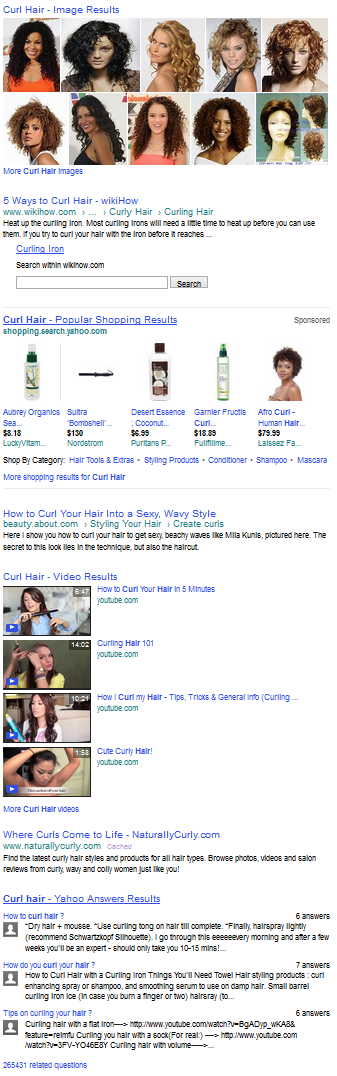

Here are a couple examples.

This one includes an extended paid affiliate listing with SeatGeek & Tumblr.

This one includes rich formatting on Instructables and Yahoo Answers.

This one includes product search blended into the middle of the organic result set.

Google SEO Services (BETA)

When Google acquired DoubleClick Larry Page wanted to keep the Performics division offering SEM & SEO services just to see what would happen. Other Google executives realized the absurd conflict of interest and potential antitrust issues, so they overrode ambitious Larry: “He wanted to see how those things work. He wanted to experiment.”

Webmasters have grown tired of Google’s duplicity as the search ecosystem shifts to pay to play, or go away.

@davidiwanow I understand the problem, just not the complaints. Google won. Find another oppty, or pay Google. Simple.— john andrews (@searchsleuth999) November 5, 2014

Google’s webmaster guidelines can be viewed as reasonable and consistent or as an anti-competitive tool. As Google eats the ecosystem, those thrown under the bus shift their perspective.

Scraping? AOG (unless we do it) Affiliate? Fucking scumbags mainly AOG (unless we get into the space) Thin content? AOG (unless we do it)— Rae Hoffman (@sugarrae) November 5, 2014

Within some sectors larger players can repeatedly get scrutiny for the same offense with essentially no response, whereas smaller players operating in that same market are slaughtered because they are small.

At this point, Google should just come out and be blunt, “any form of promotion that does not involve paying us is against our guidelines.”— Rae Hoffman (@sugarrae) November 5, 2014

Access to lawyers, politicians & media outlets = access to benefit of the doubt.

Lack those & BEST OF LUCK TO YOU ;)

And most of all, I’m tired of having to tell SMBs that Google gives zero fucks when it comes to them— Rae Hoffman (@sugarrae) November 5, 2014

Google’s page asking “Do you need an SEO?” uses terms like: scam, illicit and deceptive to help frame the broader market perception of SEO.

If ranking movements appear random & non-linear then it is hard to make sense of continued ongoing investment. The less stable Google makes the search ecosystem, the worse they make SEOs look, as…

- anytime a site ranks better, that anchors the baseline expectation of where rankings should be

- large rank swings create friction in managing client communications

- whenever search traffic falls drastically it creates real world impacts on margins, employment & inventory levels

Matt Cutts stated it is a waste of resources for him to be a personal lightning rod for criticism from black hat SEOs. When Matt mentioned he might not go back to his old role at Google some members of the SEO industry were glad. In response some other SEOs mentioned black hats have nobody to blame but themselves & it is their fault for automating things.

After all, it is not like Google arbitrarily shifts their guidelines overnight and drastically penalizes websites to a disproportionate degree ex-post-facto for the work of former employees, former contractors, mistaken/incorrect presumed intent, third party negative SEO efforts, etc.

Oh … wait … let me take that back.

Indeed Google DOES do that, which is where much of the negative sentiment Matt complained about comes from.

Recall when Google went after guest posts, a site which had a single useful guest post on it got a sitewide penalty.

Around that time it was noted Auction.com had thousands of search results for text which was in some of their guest posts.

Enjoying Aaron murdering http://t.co/UadnmwekM7 RT @aaronwall: “about 9,730 results” http://t.co/Sms5L2BFGY— Brian Provost (@brianprovost) April 9, 2014

About a month before the guest post crack down, Auction.com received a $50 million investment from Google Capital.

- Publish a single guest post on your site = Google engineers shoot & ask questions later.

- Publish a duplicated guest post on many websites, with Google investment = Google engineers see it as a safe, sound, measured, reasonable, effective, clean, whitehat strategy.

The point of highlighting that sort of disconnect was not to “out” someone, but rather to highlight the (il)legitimacy of the selective enforcement. After all, …

@mvandemar @brianprovost if anyone should have the capital needed to “do things the right way, as per G” it should be G & those G invests in— aaron wall (@aaronwall) April 9, 2014

But perhaps Google has decided to change their practices and have a more reasonable approach to the SEO industry.

An encouraging development on this front was when Auction.com was once again covered in Bloomberg. They not only benefited from leveraging Google’s data and money, but Google also offered them another assist:

Closely held Auction.com, which is valued at $1.2 billion, based on Google’s stake, also is working with the Internet company to develop mobile and Web applications and improve its search-engine optimization for marketing, Sharga said.

“In a capitalist system, [Larry Page] suggests, the elimination of inefficiency through technology has to be pursued to its logical conclusion.” ― Richard Waters

With that in mind, one can be certain Google didn’t “miss” the guest posts by Auction.com. Enforcement is selective, as always.

“The best way to control the opposition is to lead it ourselves.” ― Vladimir Lenin

Whether you turn left or right, the road leads to the same goal.

Use Verticals To Increase Reach

In the last post, we looked at how SEO has always been changing, but one thing remains constant – the quest for information.

Given people will always be on a quest for information, and given there is no shortage of information, but there is limited time, then there will always be a marketing imperative to get your information seen either ahead of the competition, or in places where the competition haven’t yet targeted.

Channels

My take on SEO is broad because I’m concerned with the marketing potential of the search process, rather than just the behaviour of the Google search engine. We know the term SEO stands for Search Engine Optimization. It’s never been particularly accurate, and less so now, because what most people are really talking about is not SEO, but GO.

Google Optimization.

Still, the term SEO has stuck. The search channel used to have many faces, including Alta Vista, Inktomi, Ask, Looksmart, MSN, Yahoo, Google and the rest, hence the label SEO. Now, it’s pretty much reduced down to one. Google. Okay, there’s BingHoo, but really, it’s Google, 24/7.

We used to optimize for multiple search engines because we had to be everywhere the visitor was, and the search engines had different demographics. There was a time when Google was the choice of the tech savvy web user. These days, “search” means “Google”. You and your grandmother use it.

But people don’t spend most of their time on Google.

Search Beyond Google

The techniques for SEO are widely discussed, dissected, debated, ridiculed, encouraged and we’ve heard all of them, many times over. And that’s just GO.

The audience we are trying to connect with, meanwhile, is on a quest for information. On their quest for information, they will use many channels.

So, who is Google’s biggest search competitor? Bing? Yahoo?

Eric Schmidt thinks it’s Amazon:

“Many people think our main competition is Bing or Yahoo,” he said during a visit to Native Instruments, a software and hardware company in Berlin. “But, really, our biggest search competitor is Amazon. People don’t think of Amazon as search, but if you are looking for something to buy, you are more often than not looking for it on Amazon.” … Schmidt noted that people are looking for a different kind of answers on Amazon’s site through the slew of reviews and product pages, but it’s still about getting information

An important point. For the user, it’s all about “getting information”. In SEO, verticals are often overlooked.

Client Selection & Getting Seen In The Right Places

I’m going to digress a little….how do you select clients, or areas to target?

I like to start from the audience side of the equation. Who are the intended audience, what does that audience really need, and where, on the web, are they? I then determine if it’s possible/plausible to position well for this intended audience within a given budget.

There is much debate amongst SEOs about what happens inside the Google black box, but we all have access to Google’s actual output in the form of search results. To determine the level of competition, examine the search results. Go through the top ten or twenty results for a few relevant keywords and see which sites Google favors, and try to work out why.

Once you look through the results and analyze the competition, you’ll get a good feel for what Google likes to see in that specific sector. Are the search results heavy on long-form information? Mostly commercial entities? Are sites large and established? New and up and coming? Do the top sites promote visitor engagement? Who links to them and why? Is there a lot of news mixed in? Does it favor recency? Are Google pulling results from industry verticals?

It’s important to do this analysis for each project, rather than rely on prescriptive methods. Why? Because Google treats sectors differently. What works for “travel” SEO may not work for “casino” SEO because Google may be running different algorithms.

Once you weed out the wild speculation about algorithms, SEO discussion can contain much truth. People convey their direct experience and will sometimes outline the steps they took to achieve a result. However, often specific techniques aren’t universally applicable due to Google treating topic areas differently. So spend a fair bit of time on competitive analysis. Look closely at the specific results set you’re targeting to discover what is really working for that sector, out in the wild.

It’s at this point where you’ll start to see cross-overs between search and content placement.

The Role Of Verticals

You could try and rank for term X, and you could feature on a site that is already ranked for X. Perhaps Google is showing a directory page or some industry publication. Can you appear on that directory page or write an article for this industry publication? What does it take to get linked to by any of these top ten or twenty sites?

Once search visitors find that industry vertical, what is their likely next step? Do they sign up for a regular email? Can you get placement on those emails? Can you get an article well placed in some evergreen section on their site? Can you advertise on their site? Figure out how visitors would engage with that site and try to insert yourself, with grace and dignity, into that conversation.

Users may by-pass Google altogether and go straight to verticals. If they like video then YouTube is the obvious answer. A few years ago when Google was pushing advertisers to run video ads they pitched YouTube as the #2 global search engine. What does it take to rank in YouTube in your chosen vertical? Create videos that will be found in YouTube search results, which may also appear on Google’s main search results.

With 200,000 videos uploaded per day, more than 600 years required to view all those videos, more than 100 million videos watched daily, and more than 300 million existing accounts, if you think YouTube might not be an effective distribution channel to reach prospective customers, think again.

There’s a branding parallel here too. If the field of SEO is too crowded, you can brand yourself as the expert in video SEO.

There’s also the ubiquitous Facebook.

Facebook, unlike the super-secret Google, has shared their algorithm for ranking content on Facebook and filtering what appears in the news feed. The algorithm consists of three components…..

If you’re selling stuff, then are you on Amazon? Many people go directly to Amazon to begin product searches, information gathering and comparisons. Are you well placed on Amazon? What does it take to be placed well on Amazon? What are people saying? What are their complaints? What do they like? What language do they use?

In 2009, nearly a quarter of shoppers started research for an online purchase on a search engine like Google and 18 percent started on Amazon, according to a Forrester Research study. By last year, almost a third started on Amazon and just 13 percent on a search engine. Product searches on Amazon have grown 73 percent over the last year while searches on Google Shopping have been flat, according to comScore

All fairly obvious, but may help you think about channels and verticals more, rather than just Google. The appropriate verticals and channels will be different for each market sector, of course. And they change over time as consumer tastes & behaviors change. At some point each of these were new: blogging, Friendster, MySpace, Digg, Facebook, YouTube, Twitter, LinkedIn, Instagram, Pinterest, Snapchat, etc.

This approach will also help us gain a deeper understanding of the audience and their needs – particularly the language people use, the questions they ask, and the types of things that interest them most – which can then be fed back into your search strategy. Emulate whatever works in these verticals. Look to create a unique, deep collection of insights about your chosen keyword area. This will in turn lead to strategic advantage, as your competition is unlikely to find such specific information pre-packaged.

This could also be characterised as “content marketing”, which it is, although I like to think of it all as “getting in front of the visitor’s quest for information”. Wherever the visitors are, that’s where you go, and then figure out how to position well in that space.

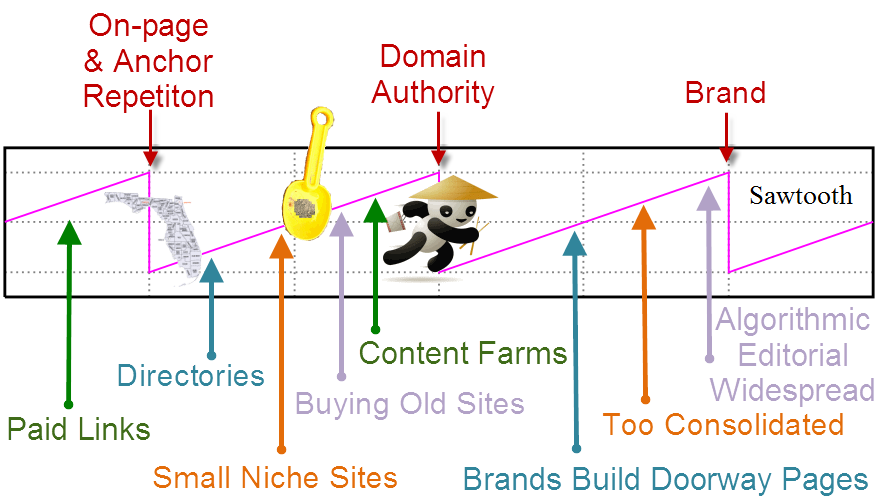

The Only Thing Certain In SEO Is Change

SEO is subject to frequent change, but in the last year or two, the changes feel both more frequent and significant than changes in the past. Florida hit in 2003. Since then, it’s like we get a Florida every six months.

Whenever Google updates the underlying landscape, the strategies need to change in order to deal with it. No fair warning. That’s not the game.

From Tweaks To Strategy

There used to be a time when SEOs followed a standard prescription. Many of us remember a piece of software called Web Position Gold.

Web Position Gold emerged when SEO could be reduced to a series of repeatable – largely technical – steps. Those steps involved adding keywords to a page, repeating those keywords in sufficient density, checking a few pieces of markup, then scoring against an “ideal” page. Upload to web. Add a few links. Wait a bit. Run a web ranking report. Voilà! You’re an SEO. In all but the most competitive areas, this actually worked.

Seems rather quaint these days.

These days, you could do all of the above and get nowhere. Or you might get somewhere, but with so many more factors in play, they can’t be isolated to an individual page score. If the page is published on a site with sufficient authority, it will do well almost immediately. If it appears on a little known site, it may remain invisible for a long time.

Before Google floated in 2004, they released an investor statement signalling SEO – well, “index spammers” – as a business risk. If you ever want to know what Google really feels about people who “manipulate” their results, it’s right here:

We are susceptible to index spammers who could harm the integrity of our web search results.

There is an ongoing and increasing effort by “index spammers” to develop ways to manipulate our web search results. For example, because our web search technology ranks a web page’s relevance based in part on the importance of the web sites that link to it, people have attempted to link a group of web sites together to manipulate web search results. We take this problem very seriously because providing relevant information to users is critical to our success. If our efforts to combat these and other types of index spamming are unsuccessful, our reputation for delivering relevant information could be diminished. This could result in a decline in user traffic, which would damage our business.

SEO competes with the Adwords business model. So, Google “take very seriously” the activities of those who seek to figure out the algorithms, reverse engineer them, and create push-button tools like Web Position Gold. We’ve had Florida, and Panda, and Penguin, and Hummingbird, all aimed at making the search experience better for users, whilst having the pleasant side effect, as far as Google is concerned, of making life more difficult for SEOs.

I think the key part of Google’s statement was “delivering relevant information”.

From Technical Exercise To PR

SEO will always involve technical aspects. You get down into code level and mark it up. The SEO needs to be aware of development and design and how those activities can affect SEO. The SEO needs to know how web servers work, and how spiders can sometimes fail to deal with their quirks.

But in the years since Florida, marketing aspects have become more important. An SEO can perform the technical aspects of SEO and get nowhere. More recent algorithms, such as Panda and Penguin, gauge the behaviour of users, as Google tries to determine information quality of pages. Hummingbird attempts to discover the intent that lies behind keywords.

As a result, keyword-based SEO is in the process of being killed off. Google withholds keyword referrer data and their various algorithms attempt to deliver pages based on a user’s intent and activity – both prior and present – in order to deliver relevant information. Understanding the user, having a unique and desirable offering, and a defensible market position is more important than any keyword markup. The keyword match, on which much SEO is based, is not an approach that is likely to endure.

The emphasis has also shifted away from the smaller operators and now appears to favour brands. This occurs not because brands are categorized as “brands”, but due to the side effects of significant PR activities. Bigger companies tend to run multiple advertising and PR campaigns, so produce signals Google finds favorable i.e. search volume on company name, semantic associations with products and services, frequent links from reputable media, and so on. This flows through into rank. And it also earns them leeway when operating in the gray area where manual penalties are handed out to smaller & weaker entities for the same activities.

Rankings

Apparently, Google killed off toolbar PageRank.

We will probably not going to be updating it [PageRank] going forward, at least in the Toolbar PageRank.

A few people noted it, but the news won’t raise many eyebrows as toolbar PR has long since become meaningless. Are there any SEOs altering what they do based on toolbar PR? It’s hard to imagine why. The reality is that an external PR value might indicate an approximate popularity level, but this isn’t an indicator of the subsequent ranking a link from such a page will deliver. There are too many other factors involved. If Google are still using an internal PR metric, it’s likely to be a significantly more complicated beast than was revealed in 1997.

A PageRank score is a proxy for authority. I’m quite sure Google kept it going as an inside joke.

A much more useful proxy for authority is the top ten pages in any niche. Google has determined all well-ranking pages have sufficient authority, and no matter what the toolbar, or any other third-party proxy, says, it’s Google’s output that counts. A link from any one of the top ten pages will likely confer a useful degree of authority, all else being equal. It’s good marketing practice to be linked from, and engage with, known leaders in your niche. That’s PR, as in public relations thinking, vs PR (PageRank), technical thinking.

The next to go will likely be keyword-driven SEO. Withholding keyword referral data was the beginning of the end. Hummingbird is hammering in the nails. Keywords are still great for research purposes – to determine if there’s an audience and what the size of that audience may be – but SEO is increasingly driven by semantic associations and site categorizations. It’s not enough to feature a keyword on a page. A page, and site, needs to be about that keyword, and keywords like it, and be externally recognized as such. In the majority of cases, a page needs to match user intent, rather than just a search term. There are many exceptions, of course, but given what we know about Hummingbird, this appears to be the trend.

People will still look at rank, and lust after prize keywords, but really, rankings have been a distraction all along. Reach and specificity are more important i.e. where’s the most value coming from? The more specific the keyword, typically the lower the bounce rate and the higher the conversion rate. The lower the bounce rate, and the higher the conversion rate, the more positive signals the site will generate, which will flow back into a ranking algorithm increasingly being tuned for engagement. Ranking for any keyword that isn’t delivering business value makes no sense.

There are always exceptions. But that’s the trend. Google are looking for pages that match user intent, not just pages that match a keyword term. In terms of reach, you want to be everywhere your customers are.

Search Is The Same, But Different

To adapt to change, SEOs should think about search in the widest possible terms. A search is a quest for information. It may be an active, self-directed search, in the form of a search engine query. Or a more passive search, delivered via social media subscriptions and the act of following. How will all these activities feed into your search strategy?

Sure, it’s not a traditional definition of SEO, as I’m not limiting it to search engines. Rather, my point is about the wider quest for information. People want to find things. Eric Schmidt recently claimed Amazon is Google’s biggest competitor in search. The mechanisms and channels may change, but the quest remains the same. Take, for example, the changing strategy of BuzzFeed:

Soon after Peretti had turned his attention to BuzzFeed full-time in 2011, after leaving the Huffington Post, BuzzFeed took a hit from Google. The site had been trying to focus on building traffic from both social media marketing and through SEO. But the SEO traffic — the free traffic driven from Google’s search results — dried up.

Reach is important. Topicality is important. Freshness, in most cases, is important. Engagement is important. Finding information is not just about a technical match of a keyword, it’s about an intellectual match of an idea. BuzzFeed didn’t take their eye off the ball. They know helping users find information is the point of the game they are in.

And the internet has only just begun.

In terms of the internet, nothing has happened yet. The internet is still at the beginning of its beginning. If we could climb into a time machine and journey 30 years into the future, and from that vantage look back to today, we’d realize that most of the greatest products running the lives of citizens in 2044 were not invented until after 2014. People in the future will look at their holodecks, and wearable virtual reality contact lenses, and downloadable avatars, and AI interfaces, and say, oh, you didn’t really have the internet (or whatever they’ll call it) back then.

In 30 years’ time, people will still be on the exact same quest for information. The point of SEO has always been to get your information in front of visitors, and that’s why SEO will endure. SEO was always a bit of a silly name, and it often distracts people from the point, which is to get your stuff seen ahead of the rest.

Some SEOs have given up in despair because it’s not like the old days. It’s becoming more expensive to do effective SEO, and the reward may not be there, especially for smaller sites. However, this might be to miss the point, somewhat.

The audience is still there. Their needs haven’t changed. They still want to find stuff. If SEO is all about helping users find stuff, then that’s the important thing. Remember the “why”. Adapt the “how”.

In the next few articles, we’ll look at the specifics of how.

Measuring SEO Performance After “Not Provided”

In recent years, the biggest change to the search landscape happened when Google chose to withhold keyword data from webmasters. At SEOBook, Aaron noticed and wrote about the change, as ever more keyword data disappeared.

The motivation to withhold this data, according to Google, was privacy concerns:

SSL encryption on the web has been growing by leaps and bounds. As part of our commitment to provide a more secure online experience, today we announced that SSL Search on https://www.google.com will become the default experience for signed in users on google.com.

At first, Google suggested it would only affect a single-digit percentage of search referral data:

Google software engineer Matt Cutts, who’s been involved with the privacy changes, wouldn’t give an exact figure but told me he estimated even at full roll-out, this would still be in the single-digit percentages of all Google searchers on Google.com

…which didn’t turn out to be the case. It now affects almost all keyword referral data from Google.

Was it all about privacy? Another rocket over the SEO bows? Bit of both? Probably. In any case, the search landscape was irrevocably changed. Instead of being shown the keyword term the searcher had used to find a page, webmasters were given the less than helpful “not provided”. This change rocked SEO. The SEO world, up until that point, had been built on keywords. SEOs choose a keyword. They rank for the keyword. They track click-thrus against this keyword. This is how many SEOs proved their worth to clients.

These days, very little keyword data is available from Google. There certainly isn’t enough keyword data to use as a primary form of measurement.

Rethinking Measurement

This change forced a rethink about measurement, and SEO in general. Whilst there is still some keyword data available from the likes of Webmaster Tools & the AdWords paid versus organic report, keyword-based SEO tracking approaches are unlikely to align with Google’s future plans. As we saw with the Hummingbird algorithm, Google is moving towards searcher-intent based search, as opposed to keyword-matched results.

Hummingbird should better focus on the meaning behind the words. It may better understand the actual location of your home, if you’ve shared that with Google. It might understand that “place” means you want a brick-and-mortar store. It might get that “iPhone 5s” is a particular type of electronic device carried by certain stores. Knowing all these meanings may help Google go beyond just finding pages with matching words

The search bar is still keyword based, but Google is also trying to figure out what user intent lies behind the keyword. To do this, they’re relying on context data. For example, they look at the user’s previous searches and location, break down the query itself, and so on, all of which can change the search results the user sees.

When SEO started, relevance meant exactly matching the keyword the user typed into a search bar with a keyword that appeared on a page. SEO continued with this model, but it’s fast becoming redundant, because Google is increasingly relying on context to determine searcher intent while filtering out many results which were too aligned with the old strategy. As a result, much SEO has shifted from keywords to wider digital marketing considerations, such as what the visitor does next.

We’ve Still Got Great Data

Okay, if SEOs don’t have keywords, what can they use?

If we step back a bit, what we’re really trying to do with measurement is demonstrate value. Value of search vs other channels, and value of specific search campaigns. Did our search campaigns meet our marketing goals and thus provide value?

Do we have enough data to demonstrate value? Yes, we do. Here are a few ways SEOs have devised to use the organic search data they are still getting to demonstrate value.

1. Organic Search VS Other Activity

Is our organic search tracking well when compared with other digital marketing channels, such as social or email? About the same? Falling?

In many ways, the withholding of keyword data can be a blessing, especially to those SEOs who have a few ranking-obsessed clients. A ranking, in itself, is worthless, especially if it’s generating no traffic.

Instead, if we look at the total amount of organic traffic, and see that it is rising, then we shouldn’t really care too much about what keywords it is coming from. We can also track organic searches across devices, such as desktop vs mobile, and get some insight into how best to optimize those channels for search as a whole, rather than by keyword. It’s important that the traffic came from organic search, rather than from other campaigns. It’s important that the visitors saw your site. And it’s important what that traffic does next.

2. Bounce Rate

If a visitor comes in, doesn’t like what is on offer, and clicks back, then that won’t help rankings. Google have been a little oblique on this point, saying they aren’t measuring bounce rate, but I suspect it’s a little more nuanced, in practice. If people are failing to engage, then anecdotal evidence suggests this does affect rankings.

Look at the behavioral metrics in GA; if your content has 50% of people spending less than 10 seconds, that may be a problem or that may be normal. The key is to look below that top graph and see if you have a bell curve or if the next largest segment is the 11-30 second crowd.

Either way, we must encourage visitor engagement. Even small improvements in terms of engagement can mean big changes in the bottom line. Getting visitors to a site was only ever the first step in a long chain. It’s what they do next that really makes or breaks a web business, unless the entire goal was that the visitor should only view the landing page. Few sites, these days, would get much return on non-engagement.
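As a rough illustration of the distribution check described in the quote above, here is a minimal sketch that groups visit durations into time buckets and reports each bucket's share. The bucket edges, the function names and the sample durations are all assumptions made up for the example; they are not taken from any particular analytics tool.

```python
from collections import Counter

# Assumed bucket edges in whole seconds; adjust to match your analytics tool.
BUCKETS = [(0, 10), (11, 30), (31, 60), (61, 180), (181, 600), (601, 1800)]


def bucket_label(seconds: int) -> str:
    """Return the label of the bucket a visit duration falls into."""
    if seconds < 0:
        raise ValueError("duration cannot be negative")
    for low, high in BUCKETS:
        if low <= seconds <= high:
            return f"{low}-{high}s"
    return "1801s+"


def duration_shares(durations: list[int]) -> dict[str, float]:
    """Share of visits in each bucket (only buckets that occur are returned)."""
    counts = Counter(bucket_label(d) for d in durations)
    total = len(durations)
    return {label: count / total for label, count in counts.items()}


# Hypothetical visit durations, in seconds.
sample = [3, 5, 8, 9, 15, 20, 45, 200, 700, 2000]
shares = duration_shares(sample)

# List buckets from largest to smallest share to see where the
# "next largest segment" after the shortest visits sits.
for label, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{label}: {share:.0%}")
```

If the shortest bucket dominates and the next largest is the 11-30 second group, that points to visitors leaving quickly across the board, rather than a split between quick bouncers and engaged readers.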

PPCers are naturally obsessed with this metric, because each click is costing them money, but when you think about it, it’s costing SEOs money, too. Clicks are getting harder and harder to get, and each click does have a cost associated with it i.e. the total cost of the SEO campaign divided by the number of clicks, so each click needs to be treated as a cost.
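The per-click cost described above is simple division, and it can be sketched as follows. The function names and the figures are hypothetical; the second function is an added assumption that treats a bounced click as wasted spend, which is one way to tie this metric back to bounce rate.

```python
def cost_per_click(total_campaign_cost: float, clicks: int) -> float:
    """Effective cost per organic click: total SEO campaign cost / clicks."""
    if clicks <= 0:
        raise ValueError("clicks must be positive")
    return total_campaign_cost / clicks


def cost_per_engaged_click(
    total_campaign_cost: float, clicks: int, bounce_rate: float
) -> float:
    """Cost per click that did not bounce.

    bounce_rate is a fraction in [0, 1), e.g. 0.5 for 50%.
    """
    if not 0 <= bounce_rate < 1:
        raise ValueError("bounce_rate must be in [0, 1)")
    engaged_clicks = clicks * (1 - bounce_rate)
    return total_campaign_cost / engaged_clicks


# Hypothetical campaign: 5,000 spent, 10,000 organic clicks, 50% bounce rate.
print(cost_per_click(5000, 10_000))
print(cost_per_engaged_click(5000, 10_000, 0.5))
```

With these made-up numbers, halving the bounce rate's effect doubles the cost of each click that actually engages, which is why engagement matters to SEOs as much as to PPCers.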

3. Landing Pages

We can still do landing page analysis. We can see the pages where visitors are entering the website. We can also see which pages are most popular, and we can tell from the topic of the page what type of keywords people are using to find it.

We could add more related keywords to these pages and see how they do, or create more pages on similar themes, using different keyword terms, and then monitor the response. Similarly, we can look at poorly performing pages and make the assumption these are not ranking against intended keywords, and mark these for improvement or deletion.

We can see how old pages vs new pages are performing in organic search. How quickly do new pages get traffic?

We’re still getting a lot of actionable data, and still not one keyword in sight.

4. Visitor And Customer Acquisition Value

We can still calculate the value to the business of an organic visitor.

We can also look at the step in the process at which organic visitors convert. Early? Late? Why? Is there some content on the site that is leading them to convert better than other content? We can still determine if organic search provided a last-click conversion, or a conversion as the result of a mix of channels, where organic played a part. We can do all of this from aggregated organic search data, with no need to look at keywords.
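To make the distinction between last-click and mixed-channel conversions concrete, here is a minimal sketch working from aggregated data only. The `Conversion` record, the channel names and the sample figures are all hypothetical, and crediting only last-click revenue to the organic visitor is a deliberately conservative assumption, not the only way to attribute value.

```python
from dataclasses import dataclass


@dataclass
class Conversion:
    path: list[str]  # channels the visitor touched, in order
    revenue: float


def organic_summary(
    conversions: list[Conversion], organic_visits: int, channel: str = "organic"
) -> dict[str, float]:
    """Count last-click and assisted conversions for one channel."""
    if organic_visits <= 0:
        raise ValueError("organic_visits must be positive")
    # Last click: the channel was the final touch before converting.
    last_click = [c for c in conversions if c.path and c.path[-1] == channel]
    # Assisted: the channel appeared earlier, but another channel closed.
    assisted = [
        c for c in conversions
        if c.path and c.path[-1] != channel and channel in c.path[:-1]
    ]
    last_click_revenue = sum(c.revenue for c in last_click)
    return {
        "last_click_conversions": len(last_click),
        "assisted_conversions": len(assisted),
        "last_click_revenue": last_click_revenue,
        # Conservative value per organic visit: last-click revenue only.
        "value_per_visit": last_click_revenue / organic_visits,
    }


# Hypothetical month of conversions and 1,000 organic visits.
conversions = [
    Conversion(["organic"], 100.0),
    Conversion(["organic", "email"], 50.0),
    Conversion(["social", "organic"], 30.0),
    Conversion(["ppc"], 20.0),
]
summary = organic_summary(conversions, organic_visits=1000)
print(summary)
```

No keyword appears anywhere in the input, which is the point: the value of the channel can be shown from channel-level paths and revenue alone.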

5. Contrast With PPC

We can contrast Adwords data back against organic search. Trends we see in PPC might also be working in organic search.

For AdWords our life is made infinitesimally easier because by linking your AdWords account to your Analytics account rich AdWords data shows up automagically allowing you to have an end-to-end view of campaign performance.

Even PPC-ers are having to change their game around keywords:

The silver lining in all this? With voice and mobile search, you’ll likely catch those conversions that you hadn’t before. While you may think that you have everything figured out and that your campaigns are optimal, this matching will force you into deeper dives that hopefully uncover profitable PPC pockets.

6. Benchmark Against Everything

In the above section I highlighted comparing organic search to AdWords performance, but you can benchmark against almost any form of data.

Is 90% of your keyword data (not provided)? Then you can look at the 10% which is provided to estimate performance on the other 90% of the traffic. If you get 1,000 monthly keyword visits for [widgets], then as a rule of thumb you might get roughly 9,000 more monthly visits for that same keyword shown as (not provided).
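The rule of thumb above is simple scaling, sketched below. The function name is hypothetical. It assumes the hidden traffic has the same keyword mix as the visible traffic, which may not hold if the visitors whose keywords are hidden search differently.

```python
def estimate_total_visits(provided_visits: float, not_provided_share: float) -> float:
    """Scale the visits reported for a keyword up to an estimated total.

    provided_visits: visits where the keyword was actually reported.
    not_provided_share: fraction of organic visits shown as (not provided).
    Assumes hidden and visible traffic share the same keyword mix.
    """
    if not 0 <= not_provided_share < 1:
        raise ValueError("not_provided_share must be at least 0 and below 1")
    return provided_visits / (1 - not_provided_share)


visible = 1000
total = estimate_total_visits(visible, not_provided_share=0.90)
hidden = total - visible
print(f"Estimated total: {round(total)}, of which hidden: {round(hidden)}")
```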

Has your search traffic gone up or down over the past few years? Are there seasonal patterns that drive user behavior? How important is the mobile shift in your market? What landing pages have performed the best over time and which have fallen hardest?

How is your site’s aggregate keyword ranking profile compared to top competitors? Even if you don’t have all the individual keyword referral data from search engines, seeing the aggregate footprints, and how they change over time, indicates who is doing better and who is gaining exposure vs losing it.

Numerous competitive research tools like SEM Rush, SpyFu & SearchMetrics provide access to that type of data.

You can also go further with other competitive research tools which look beyond the search channel. Is most of your traffic driven from organic search? Do your competitors do more with other channels? A number of sites like Compete.com and Alexa have provided estimates for this sort of data. Another newer entrant into this market is SimilarWeb.

And, finally, rank checking still has some value. While rank tracking may seem futile in the age of search personalization and Hummingbird, it can still help you isolate performance issues during algorithm updates. There are a wide variety of options from browser plugins to desktop software to hosted solutions.

By now, I hope I’ve convinced you that specific keyword data isn’t necessary and, in some cases, may have only served to distract some SEOs from seeing other valuable marketing metrics, such as what happens after the click and where visitors go next.

So long as the organic search traffic is doing what we want it to, we know which pages it is coming in on, and can track what it does next, there is plenty of data there to keep us busy. Lack of keyword data is a pain, but in response, many SEOs are optimizing for a lot more than keywords, and focusing more on broader marketing concerns.

Further Reading & Sources:

Loah Qwality Add Werds Clix Four U

Google recently announced they were doing away with exact match AdWords ad targeting this September. They will force all match types to have close variant keyword matching enabled. This means you get misspelled searches, plural versus singular overlap, and an undoing of your tight organization.

In some cases the user intent is different between singular and plural versions of a keyword. A singular version search might be looking to buy a single widget, whereas a plural search might be a user wanting to compare different options in the marketplace. In some cases people are looking for different product classes depending on word form:

For example, if you sell spectacles, the difference between users searching on ‘glass’ vs. ‘glasses’ might mean you are getting users seeing your ad interested in a building material, rather than an aid to reading.

Where segmenting improved the user experience, boosted conversion rates, made management easier, and improved margins – those benefits are now off the table.

CPC isn’t the primary issue. Profit margins are what matter. Once you lose the ability to segment you lose the ability to manage your margins. And this auctioneer is known to bid in their own auctions, have random large price spikes, and not give refunds when they are wrong.
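To make the margin point concrete, here is a small worked example with entirely made-up numbers. It assumes both segments pay the same $1.00 CPC and earn $40 profit per sale, and that close-variant clicks convert at a fifth of the exact-match rate. The `summarize` helper is hypothetical.

```python
def summarize(clicks: int, cost: float, conversions: int, profit_per_sale: float) -> dict:
    """Return profit, cost per acquisition and profit per click for a segment."""
    profit = conversions * profit_per_sale - cost
    cpa = cost / conversions if conversions else float("inf")
    per_click = profit / clicks if clicks else 0.0
    return {"profit": profit, "cpa": cpa, "profit_per_click": per_click}


# Hypothetical figures: same CPC in both segments, different conversion rates.
exact = summarize(clicks=1000, cost=1000.0, conversions=50, profit_per_sale=40.0)
close_variant = summarize(clicks=500, cost=500.0, conversions=5, profit_per_sale=40.0)

# What the advertiser sees once the two segments can no longer be separated.
blended = summarize(clicks=1500, cost=1500.0, conversions=55, profit_per_sale=40.0)

for name, seg in [("exact", exact), ("close variant", close_variant), ("blended", blended)]:
    print(f"{name}: profit ${seg['profit']:.0f}, CPA ${seg['cpa']:.2f}")
```

In this sketch the CPC never changes, yet the close-variant clicks lose money. The blended view still looks profitable, which hides the loss.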

An offline analogy for this loss of segmentation … you go to a gas station to get a bottle of water. After grabbing your water and handing the cashier a $20, they give you $3.27 back along with a six pack you didn’t want and didn’t ask for.

Why does a person misspell a keyword? Some common reasons include:

- they are new to the market & don’t know it well

- they are distracted

- they are using a mobile device or something which makes it hard to input their search query (and those same input issues make it harder to perform other conversion-oriented actions)

- their primary language is a different language

- they are looking for something else

In any of those cases, the average value of the expressed intent is usually going to be lower than that of a person who spelled the keyword correctly.

Even if spelling errors were intentional and cultural, the ability to segment that and cater the landing page to match disappears. Or if the spelling error was a cue to send people to an introductory page earlier in the conversion funnel, that option is no more.

In many accounts the loss of granular control won’t make much of a difference. But some advertiser accounts in competitive markets will become less profitable and more expensive to manage:

No one who’s in the know has more than about 5-10 total keywords in any one adgroup because they’re using broad match modified, which eliminated the need for “excessive keyword lists” a long time ago. Now you’re going to have to spend your time creating excessive negative keyword lists with possibly millions upon millions of variations so you can still show up for exactly what you want and nothing else.

You might not know which end of the spectrum your account is on until disaster strikes:

I added negatives to my list for 3 months before finally giving up opting out of close variants. What they viewed as a close variant was not even in the ballpark of what I sell. There have been petitions before that have gotten Google to reverse bad decisions in the past. We need to make that happen again.

Brad Geddes has held many AdWords seminars for Google. What does he think of this news?

In this particular account, close variations have much lower conversion rates and much higher CPAs than their actual match type.

…

Variation match isn’t always bad, there are times it can be good to use variation match. However, there was choice.

…

Loss of control is never good. Mobile control was lost with Enhanced Campaigns, and now you’re losing control over your match types. This will further erode your ability to control costs and conversions within AdWords.

A monopoly restricting choice to enhance their own bottom line. It isn’t the first time they’ve done that, and it won’t be the last.

Have an enhanced weekend!

Understanding The Google Penguin Algorithm

Whenever Google does a major algorithm update we all rush off to our data to see what changed in rankings and search traffic, and then look for trends to try to figure out what happened.

The two people I chat most with during periods of big a…

Guide To Optimizing Client Sites 2014

For those new to optimizing client sites, or those seeking a refresher, we thought we’d put together a guide to step you through it, along with some selected deeper reading on each topic area.

Every SEO has different ways of doing things, but we’ll cover the aspects that you’ll find common to most client projects.

Few Rules

The best rule I know about SEO is there are few absolutes in SEO. Google is a black box, so complete data sets will never be available to you. Therefore, it can be difficult to pin down cause and effect, so there will always be a lot of experimentation and guesswork involved. If it works, keep doing it. If it doesn’t, try something else until it does.

Many opportunities tend to present themselves in ways not covered by “the rules”. Many opportunities will be unique and specific to the client and market sector you happen to be working with, so it’s a good idea to remain flexible and alert to new relationship and networking opportunities. SEO exists on the back of relationships between sites (links) and the ability to get your content remarked upon (networking).

When you work on a client site, you will most likely be dealing with a site that is already established, so it’s likely to have legacy issues. The other main challenge you’ll face is that you’re unlikely to have full control over the site, like you would if it were your own. You’ll need to convince other people of the merit of your ideas before you can implement them. Some of these people will be open to them, some will not, and some can be rather obstructive. So, the more solid data and sound business reasoning you provide, the better chance you have of convincing people.

The most important aspect of doing SEO for clients is not blinding them with technical alchemy, but helping them see how SEO provides genuine business value.

1. Strategy

The first step in optimizing a client site is to create a high-level strategy.

“Study the past if you would define the future.” – Confucius

You’re in discovery mode. Seek to understand everything you can about the client’s business and their current position in the market. What is their history? Where are they now and where do they want to be? Interview your client. They know their business better than you do and they will likely be delighted when you take a deep interest in them.

- What are they good at?

- What are their top products or services?

- What is the full range of their products or services?

- Are they weak in any areas, especially against competitors?

- Who are their competitors?

- Who are their partners?

- Is their market sector changing? If so, how? Can they think of ways in which this presents opportunities for them?

- What keyword areas have worked well for them in the past? Performed poorly?

- What are their aims? More traffic? More conversions? More reach? What would success look like to them?

- Do they have other online advertising campaigns running? If so, what areas are these targeting? Can they be aligned with SEO?

- Do they have offline presence and advertising campaigns? Again, what areas are these targeting and can they be aligned with SEO?

Some SEO consultants see their task as gaining more rankings for an ever-growing list of keywords. Ranking for more keywords, or getting more traffic, may not produce measurable business returns; that depends on the business and its marketing goals. Some businesses will benefit from homing in on specific opportunities that are already being targeted, while others will seek wider reach. This is why it’s important to understand the business goals and market sector, then design the SEO campaign to support the goals and the environment.

This type of analysis also provides you with leverage when it comes to discussing specific rankings and competitor rankings. The SEO can’t be expected to wave a magic wand and place a client top of a category in which they enjoy no competitive advantage. Even if the SEO did manage to achieve this feat, the client may not see much in the way of return as it’s easy for visitors to click other listings and compare offers.

Understand all you can about their market niche. Look for areas of opportunity, such as changing demand not being met by your client or competitors. Put yourself in their customers’ shoes. Try to find customers and interview them. Listen to the language of customers. Go to places where their customers hang out online. From the customers’ language and needs, combined with the knowledge gleaned from interviewing the client, you can determine effective keywords and themes.

Document. Get it down in writing. The strategy will change over time, but you’ll have a baseline point of agreement outlining where the site is at now, and where you intend to take it. Getting buy-in early smooths the way for later on. Ensure that whatever strategy you adopt, it adds real, measurable value by being aligned with, and serving, the business goals. It’s on this basis the client will judge you, and maintain or expand your services in future.

Further reading:

– 4 Principles Of Marketing Strategy In The Digital Age

– Product Positioning In Five Easy Steps [pdf]

– Technology Marketers Need To Document Their Marketing Strategy

2. Site Audit

Sites can be poorly organized, have various technical issues, and miss keyword opportunities.

We need to quantify what is already there, and what’s not there.

- Use a site crawler, such as Xenu Link Sleuth, Screaming Frog or other tools that will give you a list of URLs, title information, link information and other data.

- Make a list of all broken links.

- Make a list of all orphaned pages.

- Make a list of all pages without titles.

- Make a list of all pages with duplicate titles.

- Make a list of pages with weak keyword alignment.

- Fetch robots.txt and hand-check it. It’s amazing how easy it is to disrupt crawling with a robots.txt file.
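Several of the lists above can be built from a single crawl export. The sketch below assumes each page is a dictionary with `url`, `title`, `status` and `inlinks` keys. That shape is hypothetical and is not the export format of any particular crawler. Because a crawler only finds pages it can reach through links, it also assumes the URL list was supplemented from a sitemap or analytics, so that orphaned pages appear at all.

```python
from collections import defaultdict


def audit(pages: list) -> dict:
    """Build basic audit lists from crawl data (hypothetical record shape)."""
    broken = [p["url"] for p in pages if p["status"] >= 400]
    live = [p for p in pages if p["status"] < 400]

    # Pages that nothing links to.
    orphaned = [p["url"] for p in live if p["inlinks"] == 0]

    # Pages with an empty or whitespace-only title.
    untitled = [p["url"] for p in live if not p["title"].strip()]

    # Group pages by normalized title to find duplicates.
    by_title = defaultdict(list)
    for p in live:
        title = p["title"].strip().lower()
        if title:
            by_title[title].append(p["url"])
    duplicates = {t: urls for t, urls in by_title.items() if len(urls) > 1}

    return {
        "broken": broken,
        "orphaned": orphaned,
        "untitled": untitled,
        "duplicate_titles": duplicates,
    }


# Made-up crawl data for illustration.
pages = [
    {"url": "/", "title": "Widgets Home", "status": 200, "inlinks": 12},
    {"url": "/red", "title": "Widgets", "status": 200, "inlinks": 3},
    {"url": "/blue", "title": "widgets ", "status": 200, "inlinks": 2},
    {"url": "/old", "title": "", "status": 200, "inlinks": 0},
    {"url": "/gone", "title": "Gone", "status": 404, "inlinks": 1},
]
report = audit(pages)
for name, found in report.items():
    print(name, found)
```

Weak keyword alignment is left out on purpose, since judging it usually takes a human read of the page against the keyword strategy.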

Broken links are a low-quality signal. It’s debatable whether they are a low-quality signal to Google, but they certainly are to users. If the client doesn’t have one already, implement a system whereby broken links are checked on a regular basis. Orphaned pages are pages that have no links pointing to them. Those pages may be redundant, in which case they should be removed; otherwise, point links at them so they can be crawled and have more chance of gaining rank. Page titles should be unique, aligned with keyword terms, and made attractive in order to gain a click. A link is more attractive if it speaks to a customer need. Carefully check robots.txt to ensure it’s not blocking areas of the site that need to be crawled.
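A hand-check of robots.txt can be backed up with a quick script. This sketch uses Python’s standard-library `urllib.robotparser` to test a list of important URLs against a robots.txt file. The rules and URLs are made up for illustration. The standard-library parser may not interpret every rule the way Google does, so treat the result as a first pass rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list, user_agent: str = "Googlebot") -> list:
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Made-up robots.txt that accidentally blocks the product section.
robots_txt = """\
User-agent: *
Disallow: /products/
"""

must_be_crawlable = [
    "https://example.com/products/widget",
    "https://example.com/about",
]
blocked = blocked_urls(robots_txt, must_be_crawlable)
print("Blocked:", blocked)
```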

As part of the initial site audit, it might make sense to include the site in Google Webmaster Tools to see if it has any existing issues there and to look up its historical performance on competitive research tools to see if the site has seen sharp traffic declines. If they’ve had sharp ranking and traffic declines, pull up that time period in their web analytics to isolate the date at which it happened, then look up what penalties might be associated with that date.
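One way to isolate the date is to look for the largest week-over-week percentage drop in organic visits. The sketch below assumes a weekly export of (week start, visits) pairs. The function name and the figures are hypothetical. Seasonal dips can produce the same pattern, so compare against the same weeks in earlier years before blaming a penalty.

```python
from datetime import date


def sharpest_drop(weekly_visits: list):
    """Return (week_start, fractional_change) for the steepest weekly decline.

    weekly_visits: chronologically ordered (date, visits) pairs.
    Returns None if there are fewer than two usable weeks.
    """
    worst = None
    for (_, previous), (week_start, current) in zip(weekly_visits, weekly_visits[1:]):
        if previous == 0:
            continue  # cannot compute a percentage change from zero
        change = (current - previous) / previous
        if worst is None or change < worst[1]:
            worst = (week_start, change)
    return worst


# Made-up weekly organic visit totals.
weekly = [
    (date(2014, 1, 6), 10000),
    (date(2014, 1, 13), 9800),
    (date(2014, 1, 20), 9900),
    (date(2014, 1, 27), 6000),
    (date(2014, 2, 3), 5900),
]
drop = sharpest_drop(weekly)
if drop:
    print(f"Steepest decline in week of {drop[0]}: {drop[1]:.0%}")
```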

Further Reading:

– Broken Links, Pages, Images Hurt SEO