The Webmaster Academy goes international

Webmaster level: All

Since we launched the Webmaster Academy in English back in May 2012, its educational content has been viewed well over 1 million times.

The Webmaster Academy was built to guide webmasters in creating great sites that perform well in Google search results. It is an ideal guide for beginner webmasters but also a recommended read for experienced users who wish to learn more about advanced topics.

To support webmasters across the globe, we’re happy to announce that we’re launching the Webmaster Academy in 20 languages. So whether you speak Japanese or Italian, we hope we can help you to make even better websites! You can easily access it through Webmaster Central.

We’d love to read your comments here and invite you to join the discussion in the help forums.

Posted by Giacomo Gnecchi Ruscone, Search Quality

A new opt-out tool

Webmasters have several ways to keep their sites’ content out of Google’s search results. Today, as promised, we’re providing a way for websites to opt out of having their content that Google has crawled appear on Google Shopping, Advisor, Flights, Ho…

Easier management of website verifications

Webmaster level: All

To help webmasters manage the verified owners for their websites in Webmaster Tools, we’ve recently introduced three new features:

- Verification details view: You can now see the methods used to verify an owner for your site. In the Manage owners page for your site, you can now find the new Verification details link. This screenshot shows the verification details of a user who is verified using both an HTML file uploaded to the site and a meta tag:

Where appropriate, the Verification details will have links to the correct URL on your site where the verification can be found to help you find it faster.

- Requiring the verification method be removed from the site before unverifying an owner: You now need to remove the verification method from your site before unverifying an owner from Webmaster Tools. Webmaster Tools now checks the method that the owner used to verify ownership of the site, and will show an error message if the verification is still found. For example, this is the error message shown when an unverification was attempted while the DNS CNAME verification method was still found on the DNS records of the domain:

- Shorter CNAME verification string: We’ve slightly modified the CNAME verification string to make it shorter to support a larger number of DNS providers. Some systems limit the number of characters that can be used in DNS records, which meant that some users were not able to use the CNAME verification method. The CNAME verification method now uses fewer characters. Existing CNAME verifications will continue to be valid.

We hope these changes make it easier for you to use Webmaster Tools. As always, please post in our Verification forum if you have any questions or feedback.

Posted by Pierre Far, Webmaster Trends Analyst

Making search-friendly mobile websites — now in 11 more languages

Webmaster level: Intermediate

As more and more users worldwide with mobile devices access the Internet, it’s fantastic to see so many websites making their content accessible and useful for those devices. To help webmasters optimize their sites we launched our recommendations for smartphones, feature-phones, tablets, and Googlebot-friendly sites in June 2012.

We’re happy to announce that those recommendations are now also available in Arabic, Brazilian Portuguese, Dutch, French, German, Italian, Japanese, Polish, Russian, Simplified Chinese, and Spanish. US-based webmasters are welcome to read the UK-English version.

We welcome you to go through our recommendations, pick the configuration that you feel will work best with your website, and get ready to jump on the mobile bandwagon!

Thanks to the fantastic webmaster-outreach team in Dublin, Tokyo and Beijing for making this possible!

Posted (but not translated) by John Mueller, Webmaster Trends Analyst, Zürich Switzerland

We created a first steps cheat sheet for friends & family

Everyone knows someone who just set up their first blog on Blogger, installed WordPress for the first time, or has had a website for some time but never gave search much thought. We came up with a first steps cheat sheet for just these folks. It’s a short how-to list with basic tips on search engine-friendly design that can help Google and others better understand the content and increase your site’s visibility. We made sure it’s available in thirteen languages. Please feel free to read it, print it, share it, copy and distribute it!

We hope this content will help those who are just about to start their webmaster adventure or have so far not paid too much attention to search engine-friendly design. Over time, as you gain experience, you may want to have a look at our more advanced Google SEO Starter Guide. As always, we welcome all webmasters and site owners, new and experienced, to join discussions on our Google Webmaster Help Forum.

New first stop for hacked site recovery

Webmaster Level: All

We certainly hope you never have to use our new Help for hacked sites informational series. It’s a dozen articles and over an hour of videos dedicated to helping webmasters in the unfortunate event that their site is compromised.

Overview: How and why sites are hacked

If you have further interest in why cybercriminals hack sites for spammy purposes, see Tiffany Oberoi’s explanation in Step 5: Assess the damage (hacked with spam).

Tiffany Oberoi, a Webspam engineer, shares more information about sites hacked with spam

And if you’re curious about malware, Lucas Ballard from our Safe Browsing team explains more about the topic in Step 5: Assess the damage (hacked with malware).

Lucas Ballard, a Safe Browsing engineer, and I pretend to have a totally natural conversation about malware

While we attempt to outline the necessary steps in recovery, each task remains fairly difficult for site owners unless they have advanced knowledge of system administrator commands and experience with source code. For helping fellow webmasters through the difficult recovery time, we’d like to thank the steady members in Webmaster Forum. Specifically, in the subforum Malware and hacked sites, we’d be remiss not to mention the amazing contributions of Redleg and Denis Sinegubko.

How to avoid ever needing Help for hacked sites

Just as you focus on making a site that’s good for users and search-engine friendly, keeping your site secure — for you and your visitors — is also paramount. When site owners fail to keep their site secure, hackers may exploit the vulnerability. If a hacker exploits a vulnerability, then you might need Help for hacked sites. So, to potentially avoid this scenario:

- Be vigilant about keeping software updated

- Understand the security practices of all applications, plugins, third-party software, etc., before you install them on your server. A security vulnerability in one software application can affect the safety of your entire site

- Remove unnecessary or unused software

- Enforce creation of strong passwords

- Keep all devices used to log in to your servers secure (updated operating system and browser)

- Make regular, automated backups of your site

Help for hacked sites can be found at www.google.com/webmasters/hacked. We look forward to not seeing you there!

Written by Maile Ohye, Developer Programs Tech Lead

A reminder about selling links that pass PageRank

Webmaster level: all

Google has said for years that selling links that pass PageRank violates our quality guidelines. We continue to reiterate that guidance periodically to help remind site owners and webmasters of that policy.

Please be wary if some…

Make the most of Search Queries in Webmaster Tools

Level: Beginner to Intermediate

If you’re intrigued by the Search Queries feature in Webmaster Tools but aren’t sure how to make it actionable, we have a video that we hope will help!

Maile shares her approach to Search Queries in Webmaster Tools

This video explains the vocabulary of Search Queries, such as:

- Impressions

- Average position (only the top-ranking URL for the user’s query is factored in our calculation)

- Click

- CTR

The video also reviews an approach to investigating Top queries and Top pages:

- Prepare by understanding your website’s goals and your target audience (then using Search Queries “filters” to support your knowledge)

- Sort by clicks in Top queries to understand the top queries bringing searchers to your site (for the given time period)

- Sort by CTR to notice any missed opportunities

- Categorize queries into logical buckets that simplify tracking your progress and staying in touch with users’ needs

- Sort Top pages by clicks to find the URLs on your site most visited by searchers (for the given time period)

- Sort Top pages by impressions to find valuable pages that can be used to help feature your related, high-quality, but lower-ranking pages
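As a rough illustration of the "sort by CTR" step, here is a minimal sketch that computes CTR (clicks divided by impressions) for a handful of rows and lists queries that get many impressions but few clicks. The `QueryRow` type, the sample rows, and the two thresholds are hypothetical and chosen only for the example; they are not part of Webmaster Tools.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # CTR is clicks divided by impressions; guard against zero impressions.
        return self.clicks / self.impressions if self.impressions else 0.0


def missed_opportunities(rows, min_impressions=1000, max_ctr=0.02):
    """Return rows with many impressions but a low CTR, lowest CTR first.

    Both thresholds are arbitrary assumptions; tune them for your own site.
    """
    candidates = [
        r for r in rows
        if r.impressions >= min_impressions and r.ctr <= max_ctr
    ]
    return sorted(candidates, key=lambda r: r.ctr)


# Hypothetical sample data, not real Search Queries output.
rows = [
    QueryRow("blue widgets", 5000, 50),
    QueryRow("widget repair", 1200, 120),
    QueryRow("buy widgets online", 8000, 40),
    QueryRow("widget history", 300, 3),
]

for row in missed_opportunities(rows):
    print(f"{row.query}: {row.ctr:.1%} CTR on {row.impressions} impressions")
```

Queries surfaced this way are only candidates for a closer look; whether a low CTR is really a missed opportunity depends on your site's goals and audience.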

After you’ve watched the video and applied the knowledge of your site with the findings from Search Queries, you’ll likely have several improvement ideas to help searchers find your site. If you’re up for it, let us know in the comments what Search Queries information you find useful (and why!), and of course, as always, feel free to share any tips or feedback.

Written by Maile Ohye, Developer Programs Tech Lead

A faster image search

Webmaster level: all

People looking for images on Google often want to browse through many images, looking both at the images and their metadata (detailed information about the images). Based on feedback from both users and webmasters, we redesigned Google Images to provide a better search experience. In the next few days, you’ll see image results displayed in an inline panel so it’s faster, more beautiful, and more reliable. You will be able to quickly flip through a set of images by using the keyboard. If you want to go back to browsing other search results, just scroll down and pick up right where you left off.

Here’s what it means for webmasters:

- We now display detailed information about the image (the metadata) right underneath the image in the search results, instead of redirecting users to a separate landing page.

- We’re featuring some key information much more prominently next to the image: the title of the page hosting the image, the domain name it comes from, and the image size.

- The domain name is now clickable, and we also added a new button to visit the page the image is hosted on. This means that there are now four clickable targets to the source page instead of just two. In our tests, we’ve seen a net increase in the average click-through rate to the hosting website.

- The source page will no longer load up in an iframe in the background of the image detail view. This speeds up the experience for users, reduces the load on the source website’s servers, and improves the accuracy of webmaster metrics such as pageviews. As usual, image search query data is available in Top Search Queries in Webmaster Tools.

As always, please ask on our Webmaster Help forum if you have questions.

Posted by Hongyi Li, Associate Product Manager

Webmaster Tools verification strategies

Webmaster level: all

Verifying ownership of your website is the first step towards using Google Webmaster Tools. To help you keep verification simple & reduce its maintenance to a minimum, especially when you have multiple people using Webmaster Tools, we’ve put together a small list of tips & tricks that we’d like to share with you:

- The method that you choose for verification is up to you, and may depend on your CMS & hosting providers. If you want to be sure that changes on your side don’t result in an accidental loss of the verification status, you may even want to consider using two methods in parallel.

- Back in 2009, we updated the format of the verification meta tag and file. If you’re still using the old format, we recommend moving to the newer version. The newer meta tag is called “google-site-verification”, and the newer file format contains just one line with the file name. While we’re currently supporting ye olde format, using the newer one ensures that you’re good to go in the future.

- When removing users’ access in Webmaster Tools, remember to remove any active associated verification tokens (file, meta tag, etc.). Leaving them on your server means that these users would be able to gain access again at any time. You can view the site owners list in Webmaster Tools under Configuration / Users.

- If multiple people need to access the site, we recommend using the “add users” functionality in Webmaster Tools. This makes it easier for you to maintain the access control list without having to modify files or settings on your servers.

- Also, if multiple people from your organization need to use Webmaster Tools, it can be a good policy to only allow users with email addresses from your domain. By doing that, you can verify at a glance that only users from your company have access. Additionally, when employees leave, access to Webmaster Tools is automatically taken care of when that account is disabled.

- Consider using “restricted” (read-only) access where possible. Settings generally don’t need to be changed on a daily basis, and when they do need to be changed, it can be easier to document them if they have to go through a central account.
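When cleaning up after removing a user, it can help to check which verification meta tags your homepage still serves. The following is a minimal sketch, assuming you already have the page's HTML as a string; the function name and the sample tokens are hypothetical, and it only covers the newer “google-site-verification” meta tag, not verification files or DNS records.

```python
from html.parser import HTMLParser


class VerificationTagFinder(HTMLParser):
    """Collect the content of every google-site-verification meta tag."""

    def __init__(self):
        super().__init__()
        self.tokens = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        # Attribute values can be None when the attribute has no value.
        name = (attributes.get("name") or "").lower()
        if name == "google-site-verification":
            self.tokens.append(attributes.get("content") or "")


def find_verification_tokens(html):
    """Return the verification tokens found in the given HTML, in order."""
    finder = VerificationTagFinder()
    finder.feed(html)
    return finder.tokens


# Hypothetical page with two leftover tokens.
sample_html = """
<html><head>
<title>Example</title>
<meta name="google-site-verification" content="token-for-alice">
<meta name="description" content="An example page">
<meta name="google-site-verification" content="token-for-bob" />
</head><body></body></html>
"""

print(find_verification_tokens(sample_html))
```

Any token in the output that belongs to someone who should no longer have access is a candidate for removal from the page.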

We hope these tips help you to simplify the situation around verification of your website in Webmaster Tools. For more questions about verification, feel free to drop by our Webmaster Help Forums.

Posted by John Mueller, Webmaster Trends Analyst, Zurich

Introducing Data Highlighter for event data

Webmaster Level: All

Update 19 February 2013: Data Highlighter for events structured markup is available in all languages in Webmaster Tools.

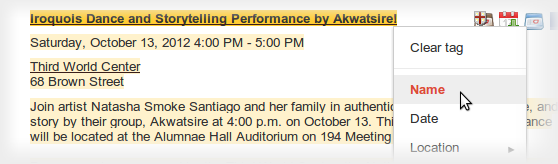

At Google we’re making more and more use of structured data to provide enhanced search results, such as rich snippets and event calendars, that help users find your content. Until now, marking up your site’s HTML code has been the only way to indicate structured data to Google. However, we recognize that markup may be hard for some websites to deploy.

Today, we’re offering webmasters a simpler alternative: Data Highlighter. At initial launch, it’s available in English only and for structured data about events, such as concerts, sporting events, exhibitions, shows, and festivals. We’ll make Data Highlighter available for more languages and data types in the months ahead.

Data Highlighter is a point-and-click tool that can be used by anyone authorized for your site in Google Webmaster Tools. No changes to HTML code are required. Instead, you just use your mouse to highlight and “tag” each key piece of data on a typical event page of your website:

If your page lists multiple events in a consistent format, Data Highlighter will “learn” that format as you apply tags, and help speed your work by automatically suggesting additional tags. Likewise, if you have many pages of events in a consistent format, Data Highlighter will walk you through a process of tagging a few example pages so it can learn about their format variations. Usually, 5 or 10 manually tagged pages are enough for our sophisticated machine-learning algorithms to understand the other, similar pages on your site.

When you’re done, you can review a sample of all the event data that Data Highlighter now understands. If it’s correct, click “Publish.”

From then on, as Google crawls your site, it will recognize your latest event listings and make them eligible for enhanced search results. You can inspect the crawled data on the Structured Data Dashboard, and unpublish at any time if you’re not happy with the results.

Here’s a short video explaining how the process works:

To get started with Data Highlighter, visit Webmaster Tools, select your site, click the “Optimization” link in the left sidebar, and click “Data Highlighter”.

If you have any questions, please read our Help Center article or ask us in the Webmaster Help Forum. Happy Highlighting!

Posted by Justin Boyan, Product Manager

Helping Webmasters with Hacked Sites

Webmaster Level: Intermediate/Advanced

Having your website hacked can be a frustrating experience and we want to do everything we can to help webmasters get their sites cleaned up and prevent compromises from happening again. With this post we wanted to outline two common types of attacks as well as provide clean-up steps and additional resources that webmasters may find helpful.

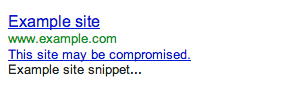

To best serve our users, it’s important that the pages that we link to in our search results are safe to visit. Unfortunately, malicious third-parties may take advantage of legitimate webmasters by hacking their sites to manipulate search engine results or distribute malicious content and spam. We alert users and webmasters alike by displaying a “This site may be compromised” warning in our search results for sites we’ve detected as hacked:

We want to give webmasters the necessary information to help them clean up their sites as quickly as possible. If you’ve verified your site in Webmaster Tools, we’ll also send you a message when we’ve identified that your site has been hacked, and when possible give you example URLs.

Occasionally, your site may become compromised to facilitate the distribution of malware. When we recognize that, we’ll identify the site in our search results with a label of “This site may harm your computer” and browsers such as Chrome may display a warning when users attempt to visit. In some cases, we may share more specific information in the Malware section of Webmaster Tools. We also have specific tips for preventing and removing malware from your site in our Help Center.

Two common ways malicious third-parties may compromise your site are the following:

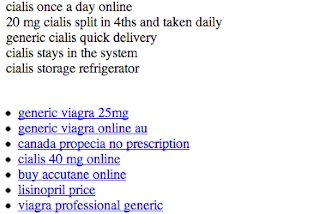

Injected Content

Hackers may attempt to influence search engines by injecting links leading to sites they own. These links are often hidden to make it difficult for a webmaster to detect this has occurred. The site may also be compromised in such a way that the content is only displayed when the site is visited by search engine crawlers.

Example of injected pharmaceutical content

If we’re able to detect this, we’ll send a message to your Webmaster Tools account with useful details. If you suspect your site has been compromised in this way, you can check the content your site returns to Google by using the Fetch as Google tool. A few good places to look for the source of such a compromise are .php files, template files and CMS plugins.
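If you have saved both the HTML your browser receives and the HTML shown by Fetch as Google, a quick comparison can highlight links that appear only in the crawler's copy. This is a minimal sketch under that assumption; the function names and the sample snippets are hypothetical, and it compares only the `href` values of `<a>` tags.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)


def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def crawler_only_links(browser_html, crawler_html):
    """Return links present in the crawler's copy but not the browser's."""
    return sorted(extract_links(crawler_html) - extract_links(browser_html))


# Hypothetical snapshots of the same page.
browser_copy = '<p><a href="/about">About</a> <a href="/contact">Contact</a></p>'
crawler_copy = (
    '<p><a href="/about">About</a> <a href="/contact">Contact</a></p>'
    '<div style="display:none"><a href="http://pills.example/">cheap pills</a></div>'
)

print(crawler_only_links(browser_copy, crawler_copy))
```

An empty result does not prove the site is clean, since injected content can take other forms than links.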

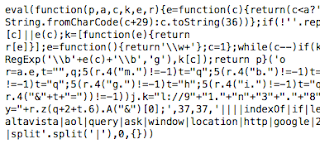

Redirecting Users

Hackers might also try to redirect users to spammy or malicious sites. They may do it to all users or target specific users, such as those coming from search engines or those on mobile devices. If you’re able to access your site when visiting it directly but you experience unexpected redirects when coming from a search engine, it’s very likely your site has been compromised in this manner.

One of the ways hackers accomplish this is by modifying server configuration files (such as Apache’s .htaccess) to serve different content to different users, so it’s a good idea to check your server configuration files for any such modifications.
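One way to start that check is to list the rewrite conditions that depend on the referrer or the user agent, since those are the ones that can serve different content to different visitors. The sketch below assumes an Apache configuration using mod_rewrite; the function name and the sample rules are hypothetical. Such conditions are also used legitimately, so treat the output as lines to review rather than proof of a compromise.

```python
import re

# RewriteCond lines that test the referrer or the user agent.
CONDITIONAL_RULE = re.compile(
    r"RewriteCond\s+%\{HTTP_(REFERER|USER_AGENT)\}", re.IGNORECASE
)


def flag_conditional_rules(config_text):
    """Return (line number, line) pairs worth reviewing by hand."""
    flagged = []
    for number, line in enumerate(config_text.splitlines(), start=1):
        stripped = line.strip()
        if stripped.startswith("#"):
            continue  # skip commented-out lines
        if CONDITIONAL_RULE.search(stripped):
            flagged.append((number, stripped))
    return flagged


# Hypothetical .htaccess content for illustration only.
sample = "\n".join([
    "RewriteEngine On",
    "# RewriteCond %{HTTP_REFERER} example of a commented-out rule",
    r"RewriteCond %{HTTP_REFERER} (google|bing)\. [NC]",
    "RewriteRule ^.*$ http://spam.example/ [R=302,L]",
    "RewriteCond %{HTTP_USER_AGENT} android [NC]",
])

for number, line in flag_conditional_rules(sample):
    print(f"line {number}: {line}")
```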

This malicious behavior can also be accomplished by injecting JavaScript into the source code of your site. The JavaScript may be designed to hide its purpose so it may help to look for terms like “eval”, “decode”, and “escape”.
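A simple text search is often enough for a first pass. The following minimal sketch reports the lines of a source file that contain any of those three terms; the function name and the sample script are hypothetical. Legitimate code uses these functions too, so each hit needs a human look.

```python
import re

# The three terms mentioned above, matched anywhere in a line (so "unescape"
# and "decodeURIComponent" are reported as well).
TERMS = re.compile(r"eval|decode|escape", re.IGNORECASE)


def find_suspicious_terms(source):
    """Return (line number, sorted list of matched terms) for each hit."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        found = sorted({m.group(0).lower() for m in TERMS.finditer(line)})
        if found:
            hits.append((number, found))
    return hits


# Hypothetical script content for illustration only.
sample = "\n".join([
    "var total = price * quantity;",
    "eval(unescape('%64%6f%63'));",
    "var s = decodeURIComponent(location.search);",
])

for number, terms in find_suspicious_terms(sample):
    print(f"line {number}: {', '.join(terms)}")
```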

Cleanup and Prevention

If your site has been compromised, it’s important to not only clean up the changes made to your site but to also address the vulnerability that allowed the compromise to occur. We have instructions for cleaning your site and preventing compromises, while your hosting provider and our Malware and Hacked sites forum are great resources if you need more specific advice.

Once you’ve cleaned up your site, you should submit a reconsideration request that, if successful, will remove the warning label in our search results.

As always, if you have any questions or feedback, please tell us in the Webmaster Help Forum.

Posted by Oliver Barrett, Search Quality Team

Giving Tablet Users the Full-Sized Web

Webmaster level: All

Since we announced Google’s recommendations for building smartphone-optimized websites, a common question we’ve heard from webmasters is how to best treat tablet devices. Android app developers face a similar question, and for that the Building Quality Tablet Apps guide is a great starting point.

Although we do not have specific recommendations for building search engine friendly tablet-optimized websites, there are some tips for building websites that serve smartphone and tablet users well.

When considering your site’s visitors using tablets, it’s important to think about both the devices and what users expect. Compared to smartphones, tablets have larger touch screens and are typically used on Wi-Fi connections. Tablets offer a browsing experience that can be as rich as any desktop or laptop machine, in a more mobile, lightweight, and generally more convenient package. This means that, unless you offer tablet-optimized content, users expect to see your desktop site rather than your site’s smartphone site.

Our recommendation for smartphone-optimized sites is to use responsive web design, which means you have one site to serve all devices. If your website uses responsive web design as recommended, be sure to test your website on a variety of tablets to make sure it serves them well too. Remember, just like for smartphones, there are a variety of device sizes and screen resolutions to test.

Another common configuration is to have separate sites for desktops and smartphones, and to redirect users to the relevant version. If you use this configuration, be careful not to inadvertently redirect tablet users to the smartphone-optimized site too.

Telling Android smartphones and tablets apart

For Android-based devices, it’s easy to distinguish between smartphones and tablets using the user-agent string supplied by browsers: Although both Android smartphones and tablets will include the word “Android” in the user-agent string, only the user-agent of smartphones will include the word “Mobile”.

In summary, any Android device that does not have the word “Mobile” in the user-agent is a tablet (or other large screen) device that is best served the desktop site.

For example, here’s the user-agent from Chrome on a Galaxy Nexus smartphone:

Mozilla/5.0 (Linux; Android 4.1.1; Galaxy Nexus Build/JRO03O) AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.166 Mobile Safari/535.19

Or from Firefox on the Galaxy Nexus:

Mozilla/5.0 (Android; Mobile; rv:16.0) Gecko/16.0 Firefox/16.0

Compare those to the user-agent from Chrome on Nexus 7:

Mozilla/5.0 (Linux; Android 4.1.1; Nexus 7 Build/JRO03S) AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.166 Safari/535.19

Or from Firefox on Nexus 7:

Mozilla/5.0 (Android; Tablet; rv:16.0) Gecko/16.0 Firefox/16.0

Because the Galaxy Nexus’s user agent includes “Mobile” it should be served your smartphone-optimized website, while the Nexus 7 should receive the full site.
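The rule above can be sketched in a few lines. This is a minimal illustration using the four user-agent strings quoted in this post; the function name and return values are hypothetical, and a real site would likely fold this check into its existing redirect logic.

```python
def classify_android_device(user_agent):
    """Return 'smartphone', 'tablet', or 'not-android' for a user-agent string.

    Follows the rule described above: Android plus "Mobile" means smartphone,
    Android without "Mobile" means tablet (or other large-screen device).
    """
    if "Android" not in user_agent:
        return "not-android"
    return "smartphone" if "Mobile" in user_agent else "tablet"


# The user-agent strings quoted above.
GALAXY_NEXUS_CHROME = (
    "Mozilla/5.0 (Linux; Android 4.1.1; Galaxy Nexus Build/JRO03O) "
    "AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.166 Mobile Safari/535.19"
)
GALAXY_NEXUS_FIREFOX = "Mozilla/5.0 (Android; Mobile; rv:16.0) Gecko/16.0 Firefox/16.0"
NEXUS_7_CHROME = (
    "Mozilla/5.0 (Linux; Android 4.1.1; Nexus 7 Build/JRO03S) "
    "AppleWebKit/535.19 (KHTML, like Gecko) Chrome/18.0.1025.166 Safari/535.19"
)
NEXUS_7_FIREFOX = "Mozilla/5.0 (Android; Tablet; rv:16.0) Gecko/16.0 Firefox/16.0"

for ua in (GALAXY_NEXUS_CHROME, GALAXY_NEXUS_FIREFOX, NEXUS_7_CHROME, NEXUS_7_FIREFOX):
    print(classify_android_device(ua))
```

Only requests classified as smartphones would be redirected to the smartphone-optimized site; everything else stays on the full site.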

We hope this helps you build better tablet-optimized websites. As always, please ask on our Webmaster Help forums if you have more questions.

Posted by Pierre Far, Webmaster Trends Analyst, and Scott Main, lead tech writer for developer.android.com

A new tool to disavow links

Today we’re introducing a tool that enables you to disavow links to your site. If you’ve been notified of a manual spam action based on “unnatural links” pointing to your site, this tool can help you address the issue. If you haven’t gotten this notification, this tool generally isn’t something you need to worry about.

First, a quick refresher. Links are one of the most well-known signals we use to order search results. By looking at the links between pages, we can get a sense of which pages are reputable and important, and thus more likely to be relevant to our users. This is the basis of PageRank, which is one of more than 200 signals we rely on to determine rankings. Since PageRank is so well-known, it’s also a target for spammers, and we fight linkspam constantly with algorithms and by taking manual action.

If you’ve ever been caught up in linkspam, you may have seen a message in Webmaster Tools about “unnatural links” pointing to your site. We send you this message when we see evidence of paid links, link exchanges, or other link schemes that violate our quality guidelines. If you get this message, we recommend that you remove from the web as many spammy or low-quality links to your site as possible. This is the best approach because it addresses the problem at the root. By removing the bad links directly, you’re helping to prevent Google (and other search engines) from taking action again in the future. You’re also helping to protect your site’s image, since people will no longer find spammy links pointing to your site on the web and jump to conclusions about your website or business.

If you’ve done as much as you can to remove the problematic links, and there are still some links you just can’t seem to get down, that’s a good time to visit our new Disavow links page. When you arrive, you’ll first select your site.

One great place to start looking for bad links is the “Links to Your Site” feature in Webmaster Tools. From the homepage, select the site you want, navigate to Traffic > Links to Your Site > Who links the most > More, then click one of the download buttons. This file lists pages that link to your site. If you click “Download latest links,” you’ll see dates as well. This can be a great place to start your investigation, but be sure you don’t upload the entire list of links to your site — you don’t want to disavow all your links!

We would reiterate that we built this tool for advanced webmasters only. We don’t recommend using this tool unless you are sure that you need to disavow some links to your site and you know exactly what you’re doing.

Q: Will most sites need to use this tool?

A: No. The vast, vast majority of sites do not need to use this tool in any way. If you’re not sure what the tool does or whether you need to use it, you probably shouldn’t use it.

Q: If I disavow links, what exactly does that do? Does Google definitely ignore them?

A: This tool allows you to indicate to Google which links you would like to disavow, and Google will typically ignore those links. Much like with rel="canonical", this is a strong suggestion rather than a directive (Google reserves the right to trust our own judgment for corner cases, for example), but we will typically use that indication from you when we assess links.

Q: How soon after I upload a file will the links be ignored?

A: We need to recrawl and reindex the URLs you disavowed before your disavowals go into effect, which can take multiple weeks.

Q: Can this tool be used if I’m worried about “negative SEO”?

A: The primary purpose of this tool is to help clean up if you’ve hired a bad SEO or made mistakes in your own link-building. If you know of bad link-building done on your behalf (e.g., paid posts or paid links that pass PageRank), we recommend that you contact the sites that link to you and try to get links taken off the public web first. You’re also helping to protect your site’s image, since people will no longer find spammy links and jump to conclusions about your website or business. If, despite your best efforts, you’re unable to get a few backlinks taken down, that’s a good time to use the Disavow Links tool.

In general, Google works hard to prevent other webmasters from being able to harm your ranking. However, if you’re worried that some backlinks might be affecting your site’s reputation, you can use the Disavow Links tool to indicate to Google that those links should be ignored. Again, we build our algorithms with an eye to preventing negative SEO, so the vast majority of webmasters don’t need to worry about negative SEO at all.

Q: I didn’t create many of the links I’m seeing. Do I still have to do the work to clean up these links?

A: Typically not. Google normally gives links appropriate weight, and under normal circumstances you don’t need to give Google any additional information about your links. A typical use case for this tool is if you’ve done link building that violates our quality guidelines, Google has sent you a warning about unnatural links, and despite your best efforts there are some links that you still can’t get taken down.

Q: I uploaded some good links. How can I undo uploading links by mistake?

A: To modify which links you would like to ignore, download the current file of disavowed links, change it to include only links you would like to ignore, and then re-upload the file. Please allow time for the new file to propagate through our crawling/indexing system, which can take several weeks.

Q: Should I create a links file as a preventative measure even if I haven’t gotten a notification about unnatural links to my site?

A: If your site was affected by the Penguin algorithm update and you believe it might be because you built spammy or low-quality links to your site, you may want to look at your site’s backlinks and disavow links that are the result of link schemes that violate Google’s guidelines.

Q: If I upload a file, do I still need to file a reconsideration request?

A: Yes, if you’ve received notice that you have a manual action on your site. The purpose of the Disavow Links tool is to tell Google which links you would like ignored. If you’ve received a message about a manual action on your site, you should clean things up as much as you can (which includes taking down any spammy links you have built on the web). Once you’ve gotten as many spammy links taken down from the web as possible, you can use the Disavow Links tool to indicate to Google which leftover links you weren’t able to take down. Wait for some time to let the disavowed links make their way into our system. Finally, submit a reconsideration request so the manual webspam team can check whether your site is now within Google’s quality guidelines, and if so, remove any manual actions from your site.

Q: Do I need to disavow links from example.com and example.co.uk if they’re the same company?

A: Yes. If you want to disavow links from multiple domains, you’ll need to add an entry for each domain.

Q: What about www.example.com vs. example.com (without the “www”)?

A: Technically these are different URLs. The disavow links feature tries to be granular. If content that you want to disavow occurs on multiple URLs on a site, you should disavow each URL that has the link that you want to disavow. You can always disavow an entire domain, of course.

Q: Can I disavow something.example.com to ignore only links from that subdomain?

A: For the most part, yes. For most well-known freehosts (e.g. wordpress.com, blogspot.com, tumblr.com, and many others), disavowing “domain:something.example.com” will disavow links only from that subdomain. If a freehost is very new or rare, we may interpret this as a request to disavow all links from the entire domain. But if you list a subdomain, most of the time we will be able to ignore links only from that subdomain.
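To make the last few answers concrete, here is a minimal sketch that assembles the text of a disavow file. It assumes a plain-text file with one entry per line, where a full URL disavows a single page, a `domain:` prefix disavows a whole domain or subdomain, and lines starting with `#` are comments. The function name and the sample entries are hypothetical.

```python
def build_disavow_file(urls=(), domains=(), note=None):
    """Return the text of a disavow file: one entry per line.

    Duplicate entries are dropped while keeping the caller's order.
    """
    lines = []
    if note:
        lines.append(f"# {note}")
    for domain in dict.fromkeys(domains):
        lines.append(f"domain:{domain}")
    for url in dict.fromkeys(urls):
        lines.append(url)
    return "\n".join(lines) + "\n"


# Hypothetical entries: two related domains need separate lines, and the
# www and non-www URLs are listed individually because they are different URLs.
text = build_disavow_file(
    urls=[
        "http://www.example.com/spam.html",
        "http://example.com/spam.html",
    ],
    domains=["example.co.uk", "something.example.com", "example.co.uk"],
    note="Site owners contacted, links not removed",
)
print(text)
```

To undo a mistaken upload, you would regenerate the file with only the entries you still want ignored and upload it again.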

Posted by Jonathan Simon, Webmaster Trends Analyst

Make the web faster with mod_pagespeed, now out of Beta

If your page is on the web, speed matters. For developers and webmasters, making your page faster shouldn’t be a hassle, which is why we introduced mod_pagespeed in 2010. Since then the development team has been working to improve the functionality, quality and performance of this open-source Apache module that automatically optimizes web pages and their resources. Now, after almost two years and eighteen releases, we are announcing that we are taking off the Beta label.

We’re committed to working with the open-source community to continue evolving mod_pagespeed, including more, better and smarter optimizations and support for other web servers. Over 120,000 sites are already using mod_pagespeed to improve the performance of their web pages using the latest techniques and trends in optimization. The product is used worldwide by individual sites, and is also offered by hosting providers such as DreamHost and Go Daddy, and by content delivery networks like EdgeCast. With the move out of beta we hope that even more sites will soon benefit from the web performance improvements offered through mod_pagespeed.

mod_pagespeed is a key part of our goal to help make the web faster for everyone. Users prefer faster sites and we have seen that faster pages lead to higher user engagement, conversions, and retention. In fact, page speed is one of the signals in search ranking and ad quality scores. Besides evangelizing for speed, we offer tools and technologies to help measure, quantify, and improve performance, such as Site Speed Reports in Google Analytics, PageSpeed Insights, and PageSpeed Optimization products. Both mod_pagespeed and PageSpeed Service are based on our open-source PageSpeed Optimization Libraries project, and are important ways in which we help websites take advantage of the latest performance best practices.

To learn more about mod_pagespeed and how to incorporate it in your site, watch our recent Google Developers Live session or visit the mod_pagespeed product page.

Posted by Joshua Marantz and Ilya Grigorik, Google PageSpeed Team

Rich snippets guidelines

Webmaster level: All

Traditional, text-only, search result snippets aim to summarize the content of a page in our search results. Rich snippets (shown above) allow webmasters to help us provide even better summaries using structured data markup that they can add to their pages. Today we’re introducing a set of guidelines to help you implement high quality structured data markup for rich snippets.

Once you’ve correctly added structured data markup to your site, rich snippets are generated algorithmically based on that markup. If the markup on a page offers an accurate description of the page’s content, is up-to-date, and is visible on your page and easily discoverable by users, our algorithms are more likely to decide to show a rich snippet in Google’s search results.

Alternatively, if the rich snippets markup on a page is spammy, misleading, or otherwise abusive, our algorithms are much more likely to ignore the markup and render a text-only snippet. Keep in mind that, while rich snippets are generated algorithmically, we do reserve the right to take manual action (e.g., disable rich snippets for a specific site) in cases where we see actions that hurt the experience for our users.

To illustrate these guidelines with some examples:

- If your page is about a band, make sure you mark up concerts being performed by that band, not by related bands or bands in the same town.

- If you sell products through your site, make sure reviews on each page are about that page’s product and not the store itself.

- If your site provides song lyrics, make sure reviews are about the quality of the lyrics, not the quality of the song itself.

In addition to the general rich snippets quality guidelines we’re publishing today, you’ll find usage guidelines for specific types of rich snippets in our Help Center. As always, if you have any questions or feedback, please tell us in the Webmaster Help Forum.

Posted by Jeremy Lubin, Consumer Experience Specialist, & Pierre Far, Webmaster Trends Analyst

Google Webmaster Guidelines updated

Webmaster level: All

Today we’re happy to announce an updated version of our Webmaster Quality Guidelines. Both our basic quality guidelines and many of our more specific articles (like those on link schemes or hidden text) have been reorganized and expanded to provide you with more information about how to create quality websites for both users and Google.

The main message of our quality guidelines hasn’t changed: Focus on the user. However, we’ve added more guidance and examples of behavior that you should avoid in order to keep your site in good standing with Google’s search results. We’ve also added a set of quality and technical guidelines for rich snippets, as structured markup is becoming increasingly popular.

We hope these updated guidelines will give you a better understanding of how to create and maintain Google-friendly websites.

Posted by Betty Huang & Eric Kuan, Google Search Quality Team

Keeping you informed of critical website issues

Webmaster level: All

Having a healthy and well-performing website is important, both to you as the webmaster and to your users. When we discover critical issues with a website, Webmaster Tools will now let you know by automatically sending an email with more information.

We’ll only notify you about issues that we think have significant impact on your site’s health or search performance and which have clear actions that you can take to address the issue. For example, we’ll email you if we detect malware on your site or see a significant increase in errors while crawling your site.

For most sites these kinds of issues will occur rarely. If your site does happen to have an issue, we cap the number of emails we send over a certain period of time to avoid flooding your inbox. If you don’t want to receive any email from Webmaster Tools you can change your email delivery preferences.

We hope that you find this change a useful way to stay up-to-date on critical and important issues regarding your site’s health. If you have any questions, please let us know via our Webmaster Help Forum.

Posted by John Mueller, Webmaster Trends Analyst, Google Zürich

Structured Data Testing Tool

Today we’re excited to share the launch of a shiny new version of the rich snippet testing tool, now called the structured data testing tool. The major improvements are:

- We’ve improved how we display rich snippets in the testing tool to better match how they appear in search results.

- The brand new visual design makes it clearer what structured data we can extract from the page, and how that may be shown in our search results.

- The tool is now available in languages other than English to help webmasters from around the world build structured-data-enabled websites.

Here’s what it looks like:

The new structured data testing tool works with all supported rich snippets and authorship markup, including applications, products, recipes, reviews, and others.

Try it yourself and, as always, if you have any questions or feedback, please tell us in the Webmaster Help Forum.

Written by Yong Zhu on behalf of the rich snippets testing tool team

Answering the top questions from government webmasters

Webmaster level: Beginner – Intermediate

Government sites, from city to state to federal agencies, are extremely important to Google Search. For one thing, governments have a lot of content — and government websites are often the canonical source of information that’s important to citizens. Around 20 percent of Google searches are for local information, and local governments are experts in their communities.

That’s why I’ve spoken at the National Association of Government Webmasters (NAGW) national conference for the past few years. It’s always interesting speaking to webmasters about search, but the people running government websites have particular concerns and questions. Since some questions come up frequently I thought I’d share this FAQ for government websites.

Question 1: How do I fix an incorrect phone number or address in search results or Google Maps?

Although managing their agency’s site is plenty of work, government webmasters are often called upon to fix problems found elsewhere on the web too. By far the most common question I’ve taken is about fixing addresses and phone numbers in search results. In this case, government site owners really can do it themselves, by claiming their Google+ Local listing. Incorrect or missing phone numbers, addresses, and other information can be fixed by claiming the listing.

Most locations in Google Maps have a Google+ Local listing — businesses, offices, parks, landmarks, etc. I like to use the San Francisco Main Library as an example: it has contact info, detailed information like the hours they’re open, user reviews and fun extras like photos. When we think users are searching for libraries in San Francisco, we may display a map and a listing so they can find the library as quickly as possible.

If you work for a government agency and want to claim a listing, we recommend using a shared Google Account with an email address at your .gov domain if possible. Usually, ownership of the page is confirmed via a phone call or postcard.

Question 2: I’ve claimed the listing for our office, but I have 43 different city parks to claim in Google Maps, and none of them have phones or mailboxes. How do I claim them?

Use the bulk uploader! If you have 10 or more listings / addresses to claim at the same time, you can upload a specially-formatted spreadsheet. Go to www.google.com/places/, click the “Get started now” button, and then look for the “bulk upload” link.

If you run into any issues, use the Verification Troubleshooter.

Question 3: We’re moving from a .gov domain to a new .com domain. How should we move the site?

We have a Help Center article with more details, but the basic process involves the following steps:

- Make sure you have both the old and new domain verified in the same Webmaster Tools account.

- Use a 301 redirect on all pages to tell search engines your site has moved permanently.

- Don’t do a single redirect from all pages to your new home page — this gives a bad user experience.

- We recommend a 1:1 match between pages on your old site and your new site. If a page has no direct match, try to redirect to a new page with similar content.

- If you can’t do redirects, consider cross-domain canonical links.

- Make sure to check if the new location is crawlable by Googlebot using the Fetch as Google feature in Webmaster Tools.

- Use the Change of Address tool in Webmaster Tools to notify Google of your site’s move.

- Have a look at the Links to Your Site in Webmaster Tools and inform the important sites that link to your content about your new location.

- We recommend not implementing other major changes at the same time, like large-scale content, URL structure, or navigational updates.

- To help Google pick up new URLs faster, use the Fetch as Google tool to ask Google to crawl your new site, and submit a Sitemap listing the URLs on your new site.

- To prevent confusion, it’s best to retain control of your old site’s domain and keep redirects in place for as long as possible — at least 180 days.
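The redirect advice in the steps above can be sketched as a small lookup that decides where each old URL should point. This is only an illustration under stated assumptions: the new host, the page map, the section fallbacks and the function name `redirect_for` are all hypothetical, and a real site would normally configure the redirects in its web server.

```python
from urllib.parse import urlsplit

# Hypothetical new home of the site.
NEW_HOST = "https://www.example.com"

# 1:1 matches between old and new pages (hypothetical paths).
PAGE_MAP = {
    "/parks/index.html": "/parks/",
    "/contact.html": "/contact/",
}

# Pages with no 1:1 match go to a new page with similar content,
# chosen here by the section of the old site they lived in.
SECTION_FALLBACKS = {
    "/parks/": "/parks/",
}


def redirect_for(old_url):
    """Return (status, new_url) for a request to the old site."""
    path = urlsplit(old_url).path or "/"
    if path in PAGE_MAP:
        target = PAGE_MAP[path]
    else:
        sections = [s for s in SECTION_FALLBACKS if path.startswith(s)]
        if sections:
            # Use the most specific (longest) matching section.
            target = SECTION_FALLBACKS[max(sections, key=len)]
        else:
            # Keep the same path on the new domain instead of
            # sending every visitor to the new home page.
            target = path
    # 301 tells search engines the move is permanent.
    return 301, NEW_HOST + target


print(redirect_for("http://www.example.org/contact.html"))
```

The point of the fallback order is that no request ends up in a blanket redirect to the home page: exact matches win, then a similar page in the same section, then the same path on the new domain.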

What if you’re moving just part of the site? This question came up too — for example, a city might move its “Tourism and Visitor Info” section to its own domain.

In that case, many of the same steps apply: verify both sites in Webmaster Tools, use 301 redirects, clean up old links, etc. In this case you don’t need to use the Change of Address form in Webmaster Tools since only part of your site is moving. If for some reason you’ll have some of the same content on both sites, you may want to include a cross-domain canonical link pointing to the preferred domain.
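As a rough illustration of the cross-domain canonical link mentioned above, the following sketch builds the `<link rel="canonical">` element that the duplicate page would carry in its `<head>`. The helper name `canonical_link_tag` and the URL are hypothetical.

```python
from html import escape


def canonical_link_tag(preferred_url):
    """Build a canonical link element pointing at the preferred URL."""
    # Escape the URL so characters such as "&" are valid inside the attribute.
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}">'


tag = canonical_link_tag("https://visit.example.com/events?year=2012&month=5")
print(tag)
```

The tag goes on the copy of the page that stays on the old domain and points at the copy on the preferred domain.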

Question 4: We’ve done a ton of work to create unique titles and descriptions for pages. How do we get Google to pick them up?

First off, that’s great! Better titles and descriptions help users decide to click through to get the information they need on your page. The government webmasters I’ve spoken with care a lot about the content and organization of their sites, and work hard to provide informative text for users.

Google’s generation of page titles and descriptions (or “snippets”) is completely automated and takes into account both the content of a page and references to it that appear on the web. Changes are picked up as we recrawl your site. But you can do two things to let us know about URLs that have changed:

- Submit an updated XML Sitemap so we know about all of the pages on your site.

- In Webmaster Tools, use the Fetch as Google feature on a URL you’ve updated. Then you can choose to submit it to the index.

- You can choose to submit all of the linked pages as well — if you’ve updated an entire section of your site, you might want to submit the main page or an index page for that section to let us know about a broad collection of URLs.
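For the first option, here is a minimal sketch of generating an XML Sitemap with Python’s standard library. It assumes the sitemaps.org 0.9 schema; the function name `build_sitemap`, the URLs and the dates are made-up examples.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            # lastmod is optional; it records when the page last changed.
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


sitemap_xml = build_sitemap([
    ("https://www.example.com/", "2012-11-01"),
    ("https://www.example.com/parks/", None),
])
print(sitemap_xml)
```

Regenerating the file whenever titles or descriptions change, and resubmitting it, is one way to keep the list of URLs current.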

Question 5: How do I get into the YouTube government partner program?

For this question, I have bad news, good news, and then even better news. On the one hand, the government partner program has been discontinued. But don’t worry, because most of the features of the program are now available to your regular YouTube account. For example, you can now upload videos longer than 10 minutes.

Did I say I had even better news? YouTube has added a lot of functionality useful for governments in the past year:

- You can now broadcast live streaming video to YouTube via Hangouts On Air (requires a Google+ account).

- You can link your YouTube account with your Webmaster Tools account, making it the “official channel” for your site.

- Automatic captions continue to get better and better, supporting more languages.

I hope this FAQ has been helpful, but I’m sure I haven’t covered everything government webmasters want to know. I highly recommend our Webmaster Academy, where you can learn all about making your site search-engine friendly. If you have a specific question, please feel free to add a question in the comments or visit our really helpful Webmaster Central Forum.

Posted by Jason Morrison, Search Quality Team